Customer Service AI Chatbot: The Complete 2026 Guide

Your moderators are answering the same questions all day. One thread asks how to connect a wallet. Another asks why the app won’t sync. A private ticket turns into a public Discord debate because the first reply missed context. Meanwhile, your team is trying to keep response times reasonable across Discord, Telegram, Slack, and web chat without burning out.

That’s where a customer service AI chatbot stops being a nice-to-have and becomes part of the operating model. The pressure is already visible in the market. The chatbot market is projected to grow at a 23.1% annual rate from 2024 to 2032, and AI-powered chatbots have already delivered a 70% increase in customer satisfaction rates through speed and round-the-clock support, according to Techjury’s marketing AI research.

The important part isn’t the hype. It’s the fit. In community-heavy support environments, the true win is simple: let AI absorb repetitive, low-risk questions so human agents can handle edge cases, emotionally charged issues, and anything that requires judgment.

Table of Contents

- The work that drains teams first

- What it does well

- Where it falls short

- From messy language to useful intent

- Why the knowledge base matters more than the model

- SaaS teams

- Gaming communities

- Web3 and crypto support

- Operational metrics

- Experience metrics

- Build the bot on clean knowledge

- Design handoffs before launch

- Train the team, not just the bot

- How to Choose the Right AI Chatbot Platform

- The Future of Support is Collaborative AI

Your Support Team Is Drowning in Tickets

The pattern is predictable. A product update goes live, your Discord gets flooded, Telegram pings start stacking up, and moderators spend half their shift retyping answers that already exist in your docs. Support quality drops first in the small ways. Slower replies. Missed nuances. Inconsistent answers. Then burnout follows.

In fast-growing communities, the problem isn’t only ticket count. It’s context switching. Public channels, private tickets, product docs, release notes, and moderator judgment all have to line up in real time. Traditional support playbooks were built for email and web forms. They don’t hold up well when users ask fragmented questions in slang, post screenshots without explanation, or revive an old thread with a new issue.

The work that drains teams first

A support queue usually breaks down into three kinds of work:

- Repetitive questions: password resets, onboarding steps, pricing clarifications, basic troubleshooting

- Context-heavy questions: issues that depend on account history, prior replies, or product configuration

- Sensitive escalations: billing disputes, trust issues, harassment reports, frustrated customers

A customer service AI chatbot is most useful on the first category and selectively useful on the second. It should not be your front line for the third unless it can route quickly and preserve context.

Practical rule: If a human agent copies and pastes the same answer multiple times a day, that answer belongs in your bot workflow.

The business case is no longer hard to make. Buyers now expect immediate responses, and support leaders need ways to scale without hiring linearly. The teams that do this well don’t treat AI like a magic agent. They treat it like a high-speed triage layer that protects the human team’s time.

What Is a Customer Service AI Chatbot

A customer service AI chatbot is a support system that can understand a user’s question in natural language, look for the right answer, and reply instantly or route the issue to a human when needed. That’s very different from the old decision-tree bots that forced users through rigid menus.

A simple way to think about it is this. A rule-based bot is like a phone tree. Press 1, press 2, start over when your issue doesn’t fit. An AI chatbot is closer to a support librarian. It takes a messy question, figures out what the person likely means, and checks the right source material before responding.

What it does well

Modern chatbots are strong at work that follows repeatable patterns. They can provide 24/7 support, cut waiting time, and handle repetitive queries automatically, which lowers operational costs. That’s one reason the hybrid model has become standard for scalable support, combining automation with human expertise, as described in QSS Technosoft’s overview of AI chatbots and support operations.

That matters most in channels where volume is uneven. Community support teams often don’t get a smooth queue. They get bursts. Launch days, incident windows, feature drops, billing cycles, and market events can all create sudden spikes.

Where it falls short

The limitations are just as important as the benefits.

- Novel issues: If the answer doesn’t exist in your knowledge base, the bot may struggle or answer too vaguely.

- Emotional nuance: Bots can detect frustration signals, but they don’t replace human judgment in tense conversations.

- Policy exceptions: Refund disputes, moderation appeals, and account-specific edge cases often need a person.

- Bad source material: If your docs are outdated, the bot will confidently repeat outdated guidance.

Good AI support feels fast and accurate. Bad AI support feels like being trapped in a loop with a machine that doesn’t understand the stakes.

The strongest implementations use the chatbot for intake, triage, repetitive resolution, and basic guidance. Humans still own trust, exceptions, and complicated problem solving. That division of labor is what keeps the experience efficient without making it feel cold.

How Modern AI Chatbots Actually Work

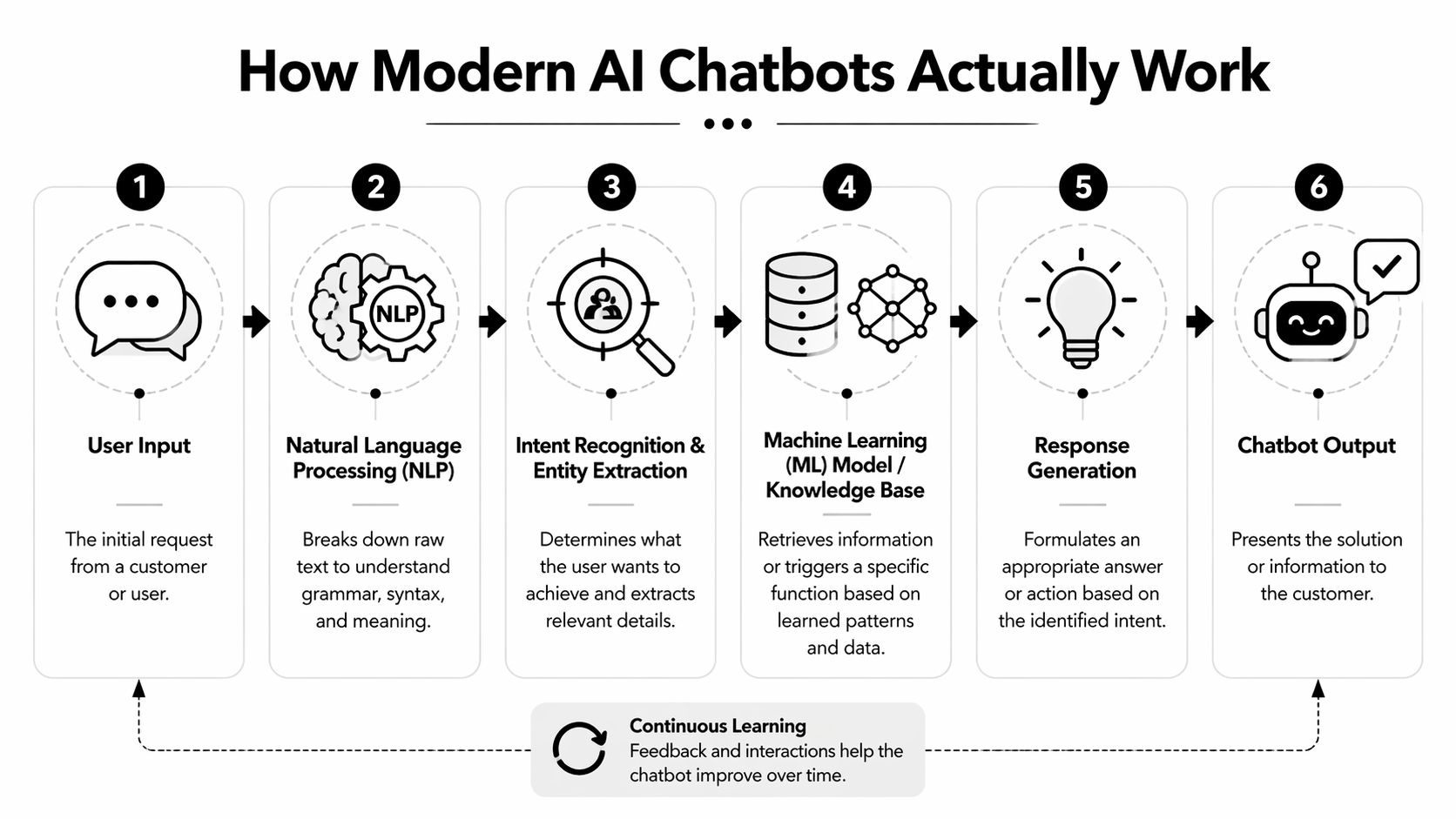

A lot of support teams delay rollout because the system sounds more technical than it is. In practice, the flow is straightforward. A user asks a question. The system interprets the language, checks approved sources, drafts a response, and either sends it or hands the conversation to a human.

According to Kayako’s breakdown of AI chatbot mechanics, Natural Language Processing (NLP) and Machine Learning (ML) have led to a 37% reduction in average response time in customer service environments, and top systems can achieve over 90% accuracy when they use Retrieval-Augmented Generation (RAG) with proprietary sources like FAQs and product documentation.

From messy language to useful intent

NLP is the layer that makes community support possible. Users rarely write neat help-desk questions in Discord or Telegram. They type fragments, slang, abbreviations, typos, and emotionally loaded messages. NLP helps the bot interpret that mess.

A rough sequence looks like this:

- Input arrives: “wallet not linking after mint”

- Intent is identified: account connection or transaction-related setup problem

- Entities are extracted: wallet, mint flow, failed linking step

- Confidence is checked: if confidence is high, the bot answers; if not, it escalates
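To make that sequence concrete, here is a minimal sketch in Python. It is not how any particular platform implements NLP: the keyword lists, the entity set, and the `CONFIDENCE_THRESHOLD` value are all assumptions made up for illustration, and a production system would use a trained language model instead of keyword matching.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical keyword lists; a real deployment would use a trained NLP model.
INTENT_KEYWORDS = {
    "wallet_connection": {"wallet", "link", "linking", "connect"},
    "sync_issue": {"sync", "syncing"},
    "billing": {"refund", "charge", "invoice"},
}
KNOWN_ENTITIES = {"wallet", "mint", "linking", "sync", "refund"}
CONFIDENCE_THRESHOLD = 0.3  # assumed cutoff; tune against real conversations


@dataclass
class Interpretation:
    intent: Optional[str]
    entities: List[str]
    confidence: float
    action: str  # "answer" or "escalate"


def interpret(message: str) -> Interpretation:
    tokens = message.lower().split()
    best_intent, best_score = None, 0.0
    for intent, keywords in INTENT_KEYWORDS.items():
        # Toy confidence: share of tokens that match this intent's keywords.
        hits = sum(1 for token in tokens if token in keywords)
        score = hits / len(tokens) if tokens else 0.0
        if score > best_score:
            best_intent, best_score = intent, score
    # Entities here are simply known product terms found in the message.
    entities = [token for token in tokens if token in KNOWN_ENTITIES]
    action = "answer" if best_score >= CONFIDENCE_THRESHOLD else "escalate"
    return Interpretation(best_intent, entities, best_score, action)
```

Calling `interpret("wallet not linking after mint")` maps the message to the hypothetical `wallet_connection` intent and picks out `wallet`, `linking`, and `mint` as entities, while a message like "help pls" matches nothing and is escalated.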

ML improves performance over time by learning from prior interactions. It doesn’t mean the bot becomes magically wise. It means the system gets better at matching common question patterns to useful answers.

Why the knowledge base matters more than the model

RAG is the part most support leaders should care about. It lets the chatbot pull from your actual support content instead of inventing generic replies. That’s what makes an answer useful in production.

If your team already has a help center, GitBook, website docs, or internal troubleshooting guides, start there. The fastest wins come from structuring those materials so the bot can retrieve precise answers cleanly. A practical place to start is this guide on optimizing your knowledge base for AI bots.

Here’s what a healthy production flow looks like:

- The bot answers directly when the question maps to trusted documentation.

- The bot asks a clarifying question when the user’s message is too vague.

- The bot hands off when confidence is low or the issue requires account review.

- The human agent receives context including the question, retrieved sources, and prior steps.
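The four behaviors above can be sketched as a single decision function. This is an illustration under stated assumptions, not a real retrieval pipeline: the two documents in `DOCS` are invented, word overlap stands in for proper semantic retrieval, and word count stands in for a real vagueness check. The thresholds are placeholders.

```python
from dataclasses import dataclass, field

# Hypothetical approved knowledge base; real content would come from your docs.
DOCS = {
    "connect-wallet": "To connect a wallet open Settings then choose Wallets and approve the request",
    "app-sync": "If the app will not sync sign out then sign back in to refresh your session",
}
ANSWER_THRESHOLD = 0.5  # assumed minimum retrieval score for a direct answer
MIN_WORDS = 3           # assumed stand-in for "too vague to answer"


@dataclass
class BotDecision:
    action: str  # "answer", "clarify", or "handoff"
    reply: str
    context: dict = field(default_factory=dict)


def overlap_score(question: str, doc: str) -> float:
    # Toy retrieval score: share of question words that appear in the document.
    q_words = set(question.lower().split())
    d_words = set(doc.lower().split())
    return len(q_words & d_words) / len(q_words) if q_words else 0.0


def decide(question: str, needs_account_review: bool = False) -> BotDecision:
    ranked = sorted(
        ((overlap_score(question, text), doc_id) for doc_id, text in DOCS.items()),
        reverse=True,
    )
    best_score, best_id = ranked[0]
    # Context the human agent receives on handoff.
    context = {
        "question": question,
        "retrieved_sources": [doc_id for score, doc_id in ranked if score > 0],
        "prior_steps": [],
    }
    if needs_account_review:
        return BotDecision("handoff", "Connecting you with a team member.", context)
    if len(question.split()) < MIN_WORDS:
        return BotDecision("clarify", "Could you share a bit more detail about what happened?")
    if best_score >= ANSWER_THRESHOLD:
        return BotDecision("answer", DOCS[best_id])
    return BotDecision("handoff", "Connecting you with a team member.", context)
```

The design point is that every handoff carries the `context` dictionary, so the agent sees the original question and whatever sources the bot already looked at.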

The biggest implementation mistake isn’t choosing the wrong model. It’s feeding the bot content written for humans who already know where to look.

When teams understand that, setup gets much easier. The project stops being “train the AI” and becomes “organize what we already know so the system can use it.”

Real-World AI Chatbot Use Cases

The best use cases aren’t generic. They match the rhythm of the channel and the type of customer asking for help. That’s why a customer service AI chatbot for a SaaS app won’t be configured the same way as one for a game server or a token community.

That distinction matters because generic bots often fail in informal channels. Community managers in Discord environments report 40-60% escalation rates when bots lose context, according to Dante AI’s analysis of chatbot challenges in customer service. In the same analysis, specialized community setups are associated with ticket load reduction of up to 60%.

SaaS teams

In SaaS, the fastest return usually comes from onboarding and first-line troubleshooting. New users ask the same cluster of questions: how to connect integrations, where to find settings, why an action failed, what a feature does.

A good bot can answer those immediately in Slack communities, embedded web chat, or product-adjacent support channels. It can also route issues based on intent. Setup question. Bug report. Billing confusion. Feature request. That routing alone makes the human queue cleaner.

What doesn’t work is giving the bot broad permission to improvise around technical edge cases. For product behavior, docs-backed answers are reliable. For account-specific debugging, handoff should happen early.

Gaming communities

Gaming support is volatile. Volume spikes around launches, patches, events, and outages. Players also communicate in shorthand. They may reference platform, mode, build, region, or a known issue without spelling any of it out.

That’s where a specialized bot helps. It can answer known bug questions, explain patch changes, point users to server-status resources, and collect structured details before an escalation reaches a moderator.

The handoff design matters more here than in almost any other segment. Players won’t tolerate a long loop if the issue blocks play.

Web3 and crypto support

Web3 communities deal with some of the hardest support conditions. Public channels move quickly, users are often global, and questions blend education, trust, and transaction anxiety.

Typical repetitive issues include wallet setup, token claim steps, allowlist checks, bridge confusion, fake link warnings, and launch timing questions. A bot is useful when it gives clear, source-backed answers and avoids any hint of improvising on sensitive topics.

Here’s what tends to work in practice:

- Public answer, private escalation: answer common questions openly, move account-specific cases to a private workflow

- Docs-first replies: pull from canonical sources such as launch docs, policy pages, and security guidance

- Moderator assist: collect the facts before a human joins, so the moderator starts with context instead of a blank thread
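As a rough sketch of the first and third patterns, the function below answers general questions in public and collects facts for account-specific cases before opening a private ticket. The issue types and field names in `REQUIRED_FIELDS` are hypothetical. They deliberately include only non-secret details, since a support flow should never ask for seed phrases or private keys.

```python
from typing import Dict, List

# Hypothetical intake fields per issue type. None of them are secrets:
# a support bot should never request seed phrases or private keys.
REQUIRED_FIELDS: Dict[str, List[str]] = {
    "token_claim": ["wallet_type", "network", "error_message"],
    "bridge": ["source_network", "destination_network", "error_message"],
}
DEFAULT_FIELDS = ["description"]


def missing_fields(issue_type: str, collected: Dict[str, str]) -> List[str]:
    required = REQUIRED_FIELDS.get(issue_type, DEFAULT_FIELDS)
    return [name for name in required if not collected.get(name)]


def next_step(issue_type: str, collected: Dict[str, str], account_specific: bool) -> str:
    # General questions stay in the public channel.
    if not account_specific:
        return "answer_in_public_channel"
    # Account-specific cases: gather facts first, then move to a private ticket.
    missing = missing_fields(issue_type, collected)
    if missing:
        return f"ask_for:{missing[0]}"
    return "open_private_ticket"
```

With this shape, a moderator who picks up the private ticket starts with the wallet type, network, and error message already attached instead of a blank thread.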

The pattern across SaaS, gaming, and Web3 is the same. The bot wins on repeatability. The human wins on ambiguity.

Key Metrics for Measuring Chatbot ROI

If you can’t measure the system, you can’t manage it. Most failed chatbot rollouts don’t fail because the AI is weak. They fail because teams never agree on what “working” means.

The strongest scorecards combine operational efficiency with customer experience. That balance matters because a bot that deflects tickets but frustrates users isn’t a success.

Operational metrics

Start with metrics that show whether the bot is removing pressure from the queue.

- AI resolution rate: This tells you how many conversations the chatbot completes without human involvement. Track it by issue type, not just overall. If resolution is strong for onboarding but weak for billing, that’s useful.

- Ticket volume reduction: Compare human-handled workload before and after rollout. Look at both total volume and category mix. A healthy bot should strip out repetitive work first.

- Average handle time: For escalated conversations, measure whether agents are resolving faster because the bot already captured context and surfaced likely answers.

These metrics show labor efficiency. They also reveal whether your handoff design is helping or creating duplicate effort.
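For teams that want to compute these from exported conversation logs, here is a small sketch. The record fields (`topic`, `resolved_by_bot`, `handle_minutes`) and the sample rows are assumptions for illustration, not real data or any vendor’s export schema.

```python
from collections import defaultdict
from typing import Dict, List

# Made-up sample records; field names are assumed, not a real export format.
conversations = [
    {"topic": "onboarding", "resolved_by_bot": True, "handle_minutes": 0},
    {"topic": "onboarding", "resolved_by_bot": True, "handle_minutes": 0},
    {"topic": "onboarding", "resolved_by_bot": False, "handle_minutes": 6},
    {"topic": "billing", "resolved_by_bot": False, "handle_minutes": 12},
    {"topic": "billing", "resolved_by_bot": False, "handle_minutes": 8},
]


def resolution_rate_by_topic(records: List[dict]) -> Dict[str, float]:
    # Share of conversations per topic that the bot closed without a human.
    totals, resolved = defaultdict(int), defaultdict(int)
    for record in records:
        totals[record["topic"]] += 1
        if record["resolved_by_bot"]:
            resolved[record["topic"]] += 1
    return {topic: resolved[topic] / totals[topic] for topic in totals}


def volume_reduction(human_tickets_before: int, human_tickets_after: int) -> float:
    # Fractional drop in human-handled tickets between two comparable periods.
    return (human_tickets_before - human_tickets_after) / human_tickets_before


def average_handle_time(records: List[dict]) -> float:
    # Mean agent minutes spent on conversations that were escalated.
    escalated = [r["handle_minutes"] for r in records if not r["resolved_by_bot"]]
    return sum(escalated) / len(escalated) if escalated else 0.0
```

On the sample rows, onboarding resolves two out of three conversations while billing resolves none, which is exactly the kind of split an overall average would blur.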

Experience metrics

The next layer is whether customers experience the difference. Sentiment analysis and predictive analytics can improve the overall support journey when the setup is thoughtful. According to Master of Code’s customer service AI statistics, these capabilities can drive an 84% improvement in digital journeys, and 70% of CX leaders see chatbots as key architects of personalized customer experiences.

That doesn’t mean every team should chase personalization first. In community support, reliability beats cleverness. Still, a few experience metrics matter a lot:

| Metric | What it tells you | What to watch for |
| --- | --- | --- |
| CSAT trend | Whether users felt the interaction was helpful | Drops after bot launch usually signal weak answers or poor handoffs |
| Escalation quality | Whether handed-off tickets arrive with useful context | If agents keep re-asking basic questions, the bot isn’t doing enough |
| Repeat contact rate | Whether users had to come back for the same issue | Repeats often point to unclear answers or outdated docs |

Operator note: Track performance by topic, channel, and escalation reason. A blended average can hide serious problems.
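A quick sketch shows why the blended average misleads. The ratings and contact records below are invented for illustration, and the tuple layouts are assumptions about how you might export the data.

```python
from collections import Counter, defaultdict
from statistics import mean
from typing import Dict, List, Tuple

# Invented sample data: (channel, CSAT rating on a 1-5 scale).
ratings = [
    ("discord", 5), ("discord", 5), ("discord", 4), ("discord", 5),
    ("telegram", 2), ("telegram", 1),
]
# Invented sample data: (user_id, topic) for each inbound contact.
contacts = [("u1", "sync"), ("u1", "sync"), ("u2", "wallet"), ("u3", "sync")]


def csat_by_channel(rows: List[Tuple[str, int]]) -> Dict[str, float]:
    grouped = defaultdict(list)
    for channel, rating in rows:
        grouped[channel].append(rating)
    return {channel: mean(values) for channel, values in grouped.items()}


def repeat_contact_rate(rows: List[Tuple[str, str]]) -> float:
    # Share of (user, topic) pairs that contacted support more than once.
    counts = Counter(rows)
    if not counts:
        return 0.0
    return sum(1 for n in counts.values() if n > 1) / len(counts)


blended_csat = mean(rating for _, rating in ratings)
```

Here the blended CSAT sits around 3.7, which looks tolerable, while the Telegram segment averages 1.5. Segmenting by channel surfaces the problem that the single number hides.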

Support leaders should review these metrics weekly in the early stage. Not because the bot changes daily, but because the knowledge base, routing rules, and escalation triggers need tuning while usage patterns are still settling.

Best Practices for Implementation

Most chatbot problems are setup problems. Teams blame the model when the actual issue is thin documentation, weak routing, or no plan for human handoff.

A better rollout starts small, stays scoped, and gets operational details right before broad expansion. If you want a practical companion piece, this overview of AI chatbots for customer service pros, cons, and best practices is useful alongside your internal launch checklist.

Build the bot on clean knowledge

Start with your top repetitive issues and the exact answers your team wants users to receive. Don’t dump every document you have into the system and hope retrieval sorts it out.

Prioritize content like this:

- Current FAQs: especially high-frequency questions with stable answers

- Step-by-step help articles: setup, troubleshooting, access, permissions, billing basics

- Policy pages: refund rules, moderation policies, eligibility requirements

- Release notes with support impact: changes that create new tickets right after launch

Short, explicit, well-structured content performs better than long narrative documentation.
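One common way to get there is to split long articles into heading-scoped chunks before import. The sketch below assumes your help articles are written in Markdown with `#` headings. The chunk fields (`source`, `title`, `body`) are hypothetical names, and any real platform will have its own ingestion format.

```python
from typing import Dict, List


def split_by_heading(markdown_text: str, source: str) -> List[Dict[str, str]]:
    """Split a Markdown article into one chunk per heading."""
    chunks: List[Dict[str, str]] = []
    title, lines = "Introduction", []

    def flush() -> None:
        # Store the current section if it has any non-blank content.
        body = "\n".join(lines).strip()
        if body:
            chunks.append({"source": source, "title": title, "body": body})

    for line in markdown_text.splitlines():
        if line.startswith("#"):
            flush()
            title = line.lstrip("#").strip()
            lines = []
        else:
            lines.append(line)
    flush()
    return chunks


article = "# Connect a wallet\nOpen Settings.\n\n## Troubleshooting\nRestart the app.\n"
chunks = split_by_heading(article, source="help-center")
```

Each chunk keeps its heading as a title, so a retrieved answer can point back to one specific section instead of an entire page.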

Design handoffs before launch

The human experience after escalation matters as much as the bot reply before it. If users have to repeat themselves, the system feels broken even when the automation worked technically.

Set handoff rules around clear triggers:

- low confidence

- sentiment that suggests frustration or urgency

- account-specific requests

- trust and safety concerns

- repeated failure after one or two bot attempts
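These triggers translate naturally into an explicit rule check. The sketch below is one possible shape, with assumed field names, an assumed sentiment scale from -1 to 1, and placeholder thresholds. It also treats a direct request for a human as a trigger in its own right.

```python
from dataclasses import dataclass
from typing import List

# Placeholder thresholds; tune them against your own escalation data.
LOW_CONFIDENCE = 0.6
NEGATIVE_SENTIMENT = -0.4  # assumes sentiment is scored from -1 to 1
MAX_FAILED_ATTEMPTS = 2


@dataclass
class ConversationState:
    confidence: float
    sentiment: float
    account_specific: bool
    trust_and_safety: bool
    failed_bot_attempts: int
    user_requested_human: bool = False


def handoff_reasons(state: ConversationState) -> List[str]:
    """Return every trigger that fired; an empty list means the bot continues."""
    reasons = []
    if state.user_requested_human:
        reasons.append("user_requested_human")
    if state.confidence < LOW_CONFIDENCE:
        reasons.append("low_confidence")
    if state.sentiment <= NEGATIVE_SENTIMENT:
        reasons.append("negative_sentiment")
    if state.account_specific:
        reasons.append("account_specific")
    if state.trust_and_safety:
        reasons.append("trust_and_safety")
    if state.failed_bot_attempts >= MAX_FAILED_ATTEMPTS:
        reasons.append("repeated_bot_failure")
    return reasons
```

Returning the full list of reasons, instead of a single yes or no, gives you the escalation-reason data you need for reporting and tuning later.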

Never make the user prove they deserve a human. Build that route into the system from day one.

In community channels, handoff design should also control where the conversation goes. Public threads are fine for general guidance. Sensitive issues should move to a private ticket or inbox with context attached.

Train the team, not just the bot

Moderators and agents need to know how to work with the system. That includes reviewing failed queries, spotting content gaps, and deciding which issues should never be bot-led.

A practical implementation rhythm looks like this:

- Launch on a narrow set of repetitive questions.

- Review unresolved conversations every week.

- Fix docs before changing prompts.

- Add new intents only after the current set performs reliably.

- Keep one owner accountable for taxonomy, content quality, and reporting.

This is the difference between a bot that becomes part of support operations and a bot that annoys users until the team turns it off.

How to Choose the Right AI Chatbot Platform

Platform selection gets easier when you judge tools by workflow fit instead of feature count. A polished demo doesn’t matter if the tool can’t manage Discord, Slack, Telegram, and web support without losing context.

For community-first teams, several capabilities are essential. You need native channel coverage, strong knowledge ingestion, usable analytics, and a human collaboration model that doesn’t collapse once volume rises.

Here’s a practical evaluation table.

| Feature | Why It Matters | What to Look For |
| --- | --- | --- |
| Native community integrations | Support should happen where users already ask for help | Discord, Telegram, Slack, and web chat support without brittle workarounds |
| Flexible knowledge base import | Faster setup and better answer quality | Website, help center, GitBook, Google Docs, and similar sources |
| Unified inbox | Human agents need one place to manage escalations | Shared views, conversation history, ownership, and status tracking |
| Strong handoff controls | Complex issues must move cleanly to humans | Confidence-based escalation, private routing, and context transfer |
| Analytics dashboard | You need proof of value and fast feedback loops | Resolution trends, ticket volume trends, response timing, and satisfaction signals |
| Pricing that scales with the team | Support tools shouldn’t punish collaboration | Plans that don’t force seat anxiety every time moderators need access |

A side-by-side comparison can help if you’re weighing community-first tooling against traditional support suites. This review of Mava vs Zendesk highlights the trade-offs around channel fit and workflow design.

One option in this category is Mava, which supports shared inbox workflows across Discord, Telegram, Slack, and the web, with knowledge imports from sources like websites, GitBook, and Google Docs. That architecture makes sense for teams that run support in public and private community channels rather than only in a classic help desk.

The deeper question isn’t “which bot has the most AI.” It’s “which platform preserves context when automation stops and human work begins.” That’s the question that determines whether your team scales.

The Future of Support is Collaborative AI

The support model that works now is collaborative. AI handles repetitive questions, instant retrieval, and first-response coverage. Humans handle exceptions, trust, and decisions that need judgment.

That matters even more in Discord, Telegram, Slack, and other community channels where support is fast, informal, and public. A customer service AI chatbot can reduce queue pressure and improve consistency, but only if it’s grounded in real documentation, connected to the right channels, and paired with clean escalation rules.

The teams that get value from AI don’t chase novelty. They build disciplined systems. They decide what the bot should answer, what it should never answer, how a handoff works, and which metrics matter.

That’s why support isn’t becoming less human. It’s becoming more selective about where humans spend their time. When AI takes the repetitive load, agents can focus on the work customers remember: solving hard problems, calming tense situations, and helping people feel taken care of.

If your team supports users in Discord, Telegram, Slack, or on the web, Mava is worth a look. It combines AI answers, a shared inbox, knowledge base imports, and human handoff workflows built for community-driven support.