Customer Service Soft Skills: The Complete Training Guide

Technical knowledge gets a support agent through the door. Soft skills are what keep customers coming back. No matter how thorough your product documentation is or how fast your team responds, a reply that feels cold, dismissive, or robotic can undo everything. Customer service soft skills are the human layer that turns a ticket into a trust-building moment. This guide walks through each core skill, how to train it effectively, and how to measure whether it's actually taking hold in your team.

Why Soft Skills Are the Make-or-Break Layer in Human-Handled Support

Ask any support manager what separates a good agent from a great one, and you'll rarely hear "they knew the product better." The difference almost always comes down to how they communicate. Skills like empathy, tone awareness, active listening, and clear problem framing shape the experience customers remember long after the issue is resolved. According to the Salesforce State of Service report, 80% of customers say the experience a company provides is just as important as its products or services. That finding helps explain why communication quality deserves as much attention as speed or volume.

This matters even more for teams supporting communities on Discord, Telegram, or Slack. These channels are public, fast-moving, and emotionally charged. A poorly worded reply in a public Discord thread doesn't just affect one user. It plays out in front of hundreds. The interpersonal skills that work in private email support need to be sharper and more deliberate in community environments.

So what are soft skills in customer service, exactly? They're the behaviors and communication habits that influence how customers feel during an interaction, not just whether their problem gets solved. Technical knowledge is learnable from a manual. The relational side requires deliberate, ongoing practice.

Empathy: The Foundation for Handling Angry Customers in Public Channels

Empathy is the most cited and least consistently practiced soft skill in customer service. Agents understand the concept in theory but freeze when a visibly frustrated user posts a complaint mid-conversation in a community server. The natural instinct is to jump straight to troubleshooting. The smarter move is to acknowledge the feeling first.

Goleman's EQ model identifies empathy as one of 12 core competencies across four domains: self-awareness, self-management, social awareness, and relationship management. In support contexts, that means recognizing the emotional signal in a message before formulating a response. When a Web3 user publicly accuses support of "scamming" in a Discord channel, or a gaming user's assets are frozen during peak play, that person isn't just reporting a bug. They're expressing fear and frustration. Skipping acknowledgment makes agents sound transactional. Something like "I can see this has been frustrating, let me help sort this out" costs nothing in time but signals genuine attention, and shows everyone watching that your brand takes concerns seriously.

Empathy reframes the entire interaction. Rather than treating a complaint as a problem to close, it positions the agent as an ally, and that changes how the whole conversation unfolds.

How to Train Empathy Through Discord and Telegram Scenario Drills

Agents learn empathy best by practicing under pressure, not by reading about it. Set up a dedicated training channel in Discord or Telegram where a team lead plays the role of an upset user, posting realistic complaints modeled after actual past tickets. Agents take turns responding in real time, using the same tools and format they'd use in a live interaction. After each exchange, the group reviews the response together: What landed well? Where did the tone fall flat? Did the agent jump to a solution before acknowledging the emotion?

The goal isn't to script empathetic phrases but to build instincts. Teams that run these drills consistently tend to see fewer escalations, because agents get better at reading what a customer actually needs before they respond.

Active Listening: Understanding the Real Problem Before Responding

Active listening means understanding what the customer is actually trying to communicate, including what they may not have stated directly. A user saying "this keeps happening" in a support thread is signaling recurring frustration, not just a one-time issue. Missing that detail leads to a response that technically addresses the surface problem while completely missing the real concern.

In text-based support, active listening shows up in how agents parse messages before replying. It means reading the full message before drafting anything, noticing emotional language, and asking targeted clarifying questions rather than generic ones. Agents who do this well also tend to generate fewer follow-up tickets, because they solve the issue fully the first time.

Active Listening Techniques for Text-Based, High-Volume Support

High message volume is one of the biggest challenges to active listening in community support. When a team is managing dozens of simultaneous conversations across Discord, Telegram, and web chat, the temptation to skim and respond quickly is real. But that's exactly where quality erodes.

Summarizing the customer's issue back to them before offering a solution confirms the agent understood correctly and signals that the message was genuinely read. Open-ended questions like "Can you walk me through what happened before this error appeared?" often surface details that change the diagnosis entirely. Templates are useful, but they should always be personalized to reflect the specifics of each conversation.

Tone Calibration and De-Escalation When a Conversation Is Already on Fire

Tone calibration means meeting the customer where they are emotionally, then gradually steering the conversation toward a calmer register. This doesn't mean mirroring anger with urgency. It means acknowledging the intensity of the situation while modeling a steady, focused tone that signals both control and care. Language like "let's figure this out together" keeps things solution-focused without minimizing the customer's frustration.

In community support channels, tone mistakes are especially costly. A response that reads as sarcastic or dismissive in a Discord thread gets screenshotted and shared. Strong agents in these environments read the community's cultural tone and match it appropriately while staying professional.

De-Escalation Drills for Emotionally Charged Community Support

De-escalation is a trainable skill, not a personality trait. The LEAPS model (Listen, Empathize, Ask, Paraphrase, Summarize) gives agents a repeatable structure to fall back on when emotions run high, which is especially useful when a Telegram group floods with messages during a product outage.

Structured de-escalation drills work similarly to empathy drills but focus specifically on tone recovery. A trainer writes a message at peak frustration, perhaps from a user publicly threatening to leave a Telegram group, and agents practice responding in a way that acknowledges the emotion without amplifying it. First drafts often reveal where default instincts take over: agents get overly defensive, or swing into over-apologizing without committing to action. Feedback sessions after each drill build a shared vocabulary around what good de-escalation actually looks like in your specific community context.

Problem-Solving Under Pressure: Turning Frustration Into Resolution

Strong problem-solving under pressure is about structured thinking, not just quick thinking. Agents who jump to a solution too fast often guess wrong, which adds frustration rather than reducing it. A useful framework is separating diagnosis from resolution. Before proposing any fix, an agent should be able to state clearly what the actual problem is. That one-step pause improves accuracy and gives the customer confidence that the agent has genuinely engaged.

Ownership matters here too. When an agent takes visible ownership of a problem, even one they can't fully resolve independently, trust holds firm. Saying "I'm going to stay on this with you until we have a resolution" is a commitment customers remember.

How to Build a Continuous Soft Skills Training Practice for Your Support Team

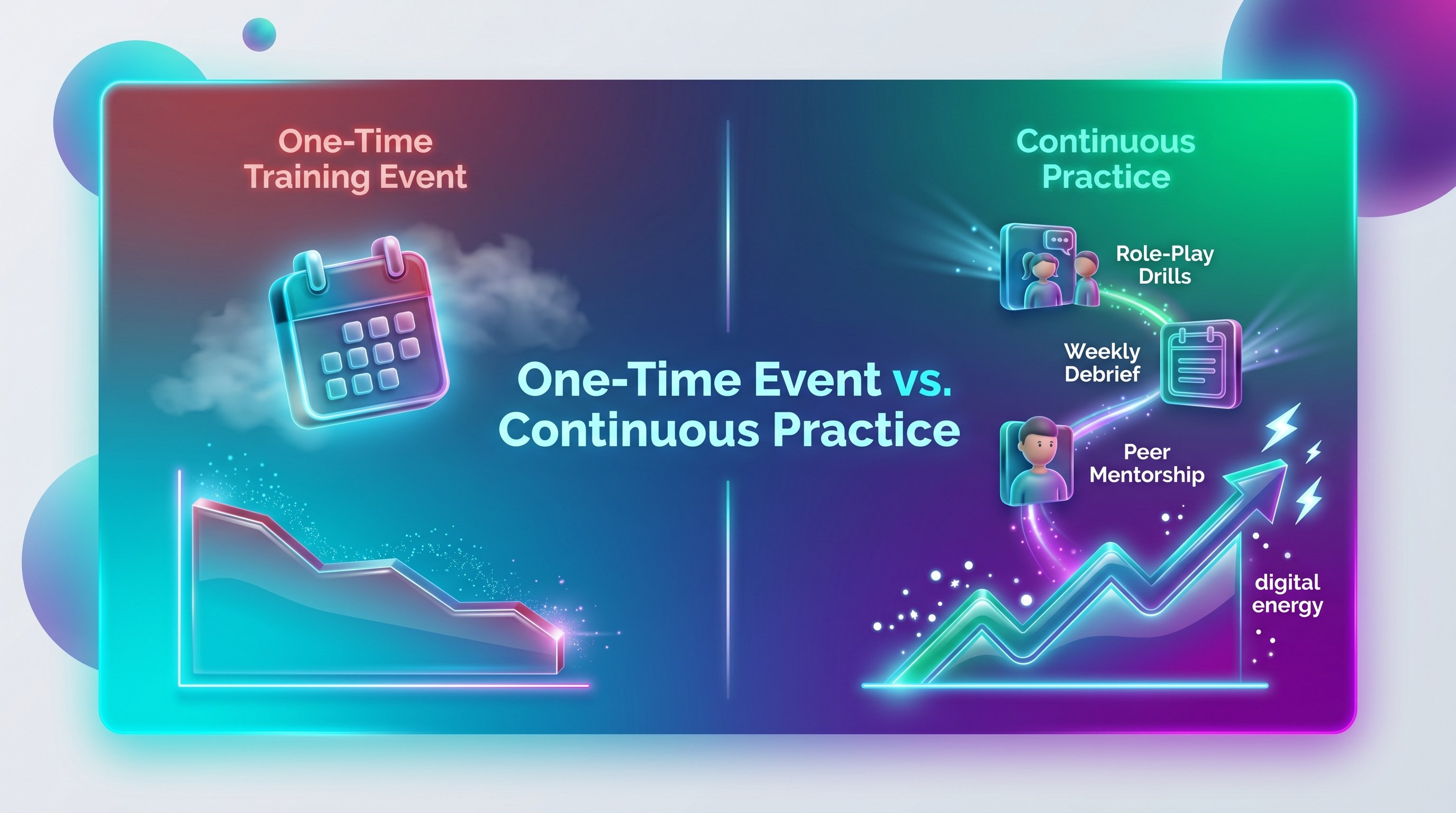

Soft skills training doesn't stick when it's treated as a one-time event. Building a continuous practice means embedding development into the regular rhythm of your team's week. Role-playing drills for scenario practice, weekly debriefs where real tickets are reviewed as learning examples, and mentorship pairings where senior agents work alongside newer ones all reinforce different aspects of development.

One of the most overlooked elements of ongoing training is agent emotional fatigue. Teams managing large Discord or Telegram communities face a steady stream of high-emotion, high-stakes interactions. When human agents are handling only the tickets that AI couldn't resolve, those conversations are almost always the hardest ones. That concentrated emotional load compounds over time and affects every soft skill across the board: empathy gets thinner, tone calibration slips, patience shortens. Acknowledging this openly, and building in structured recovery time, peer debrief moments, and rotation practices, isn't optional. It's what keeps soft skill quality consistent at scale.

Mava's AI support handles up to 50 percent of repetitive community queries, which meaningfully reduces the volume burden on human agents. That capacity shift matters because it lets your agents focus their soft skills where they have the most impact: the complex, emotionally loaded escalations where human judgment is irreplaceable.

Peer feedback is also underutilized. Agents are often best positioned to recognize growth and gaps in each other's work because they're doing the same job. Creating structured space for peer review, whether through ticket audits or group debriefs, builds a culture where development is shared rather than purely top-down.

Measuring Soft Skill Development: What to Track Beyond CSAT Scores

CSAT is a useful starting point, but it doesn't tell the full story. A customer might rate an interaction positively simply because their issue was resolved, even if the communication was clunky. Conversely, a low score doesn't always mean soft skills were the problem. Pairing CSAT with NPS (Net Promoter Score, a measure of how likely a customer is to recommend your brand) gives a more complete picture of whether soft skill quality is actually building long-term trust.
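For teams that export survey results, here is a minimal sketch of how the two scores are commonly calculated. It assumes NPS answers on a 0-10 scale (9-10 counted as promoters, 0-6 as detractors) and CSAT ratings on a 1-5 scale where 4 and above counts as satisfied. The function names and the threshold are illustrative assumptions, not part of any specific tool.

```python
def nps(scores):
    """Net Promoter Score: percent promoters (9-10) minus percent detractors (0-6)."""
    if not scores:
        raise ValueError("at least one survey answer is required")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def csat(ratings, satisfied_threshold=4):
    """CSAT as the percent of ratings at or above the threshold (assumed 1-5 scale)."""
    if not ratings:
        raise ValueError("at least one rating is required")
    satisfied = sum(1 for r in ratings if r >= satisfied_threshold)
    return 100 * satisfied / len(ratings)


# Hypothetical survey data for illustration only.
nps_answers = [10, 9, 8, 7, 6, 3, 10, 9, 5, 10]
csat_ratings = [5, 4, 3, 5, 2]

nps_value = nps(nps_answers)
csat_value = csat(csat_ratings)
```

Tracking both numbers over the same period makes it easier to spot cases where tickets are being resolved but long-term trust is not improving.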

Qualitative review of actual conversations provides the most direct insight. QA reviewers using your shared inbox can apply a consistent rubric across ticket samples to surface patterns that scores alone never would. Here are five behavioral indicators worth tracking in every chat or ticket review:

- Did the agent acknowledge frustration before offering a solution?

- Did the agent summarize the customer's issue before responding?

- Did the agent's tone stay steady and solution-focused throughout?

- Did the agent state the problem clearly before proposing a fix?

- Did the agent take visible ownership rather than deflecting?
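The five indicators above can be tallied across a sample of reviewed tickets to show which behaviors are landing and which need more drilling. The sketch below is one possible way to do that. The indicator keys, the `TicketReview` structure, and the sample data are hypothetical and would need to match however your QA reviewers actually record their checks.

```python
from dataclasses import dataclass

# Hypothetical keys, one per behavioral indicator in the rubric.
INDICATORS = (
    "acknowledged_frustration",
    "summarized_issue",
    "steady_tone",
    "stated_problem",
    "took_ownership",
)


@dataclass
class TicketReview:
    ticket_id: str
    checks: dict  # indicator name -> True/False as marked by the reviewer


def indicator_rates(reviews):
    """Return, per indicator, the share of reviewed tickets that met it."""
    if not reviews:
        return {name: 0.0 for name in INDICATORS}
    return {
        name: sum(1 for r in reviews if r.checks.get(name, False)) / len(reviews)
        for name in INDICATORS
    }


# Illustrative sample: two reviewed tickets.
sample = [
    TicketReview("T-1", {name: True for name in INDICATORS}),
    TicketReview("T-2", {
        "acknowledged_frustration": False,
        "summarized_issue": True,
        "steady_tone": True,
        "stated_problem": True,
        "took_ownership": False,
    }),
]

rates = indicator_rates(sample)
for name, rate in rates.items():
    print(f"{name}: {rate:.0%}")
```

Reporting a rate per indicator, rather than a single blended score, keeps the feedback specific enough for agents to act on.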

When measurement is specific, agents know what they're working toward. When it's vague or purely score-based, development stalls. The teams that improve fastest treat soft skill measurement as an ongoing conversation, not a quarterly report.

Customer service soft skills training isn't a nice-to-have for support teams operating in community-driven environments. It's the core of what makes human-handled support worth the investment. AI can handle volume. What your agents need to deliver is something tools alone can't replicate: the kind of interaction that makes a frustrated user feel genuinely valued.