
Anti Nuke Bot Discord: Protect Your Server Now

You’re probably reading this because your Discord server has grown past the point where “we’ll notice if something weird happens” is a real security plan. Maybe you run a game community, a SaaS support server, or a Web3 project where one bad moderator action can wipe months of work in minutes. That’s the moment when an anti-nuke bot setup for Discord stops being optional and starts being basic operational hygiene.

Most guides treat anti-nuke protection like a shopping list. Add a bot, toggle a few modules, move on. That mindset gets servers hurt. Good protection isn’t just about installing Wick Bot, Security Bot, or another tool with a dashboard. It’s about deciding who gets trusted, what actions should trigger intervention, and how much friction your team can tolerate before the bot starts slowing down legitimate work.

The hard part is that anti-nuke security always involves trade-offs. Tight thresholds catch attacks faster, but they can also punish a rushed moderator during an event. Broad whitelists reduce false positives, but they create blind spots that attackers love. If you understand those trade-offs before you configure anything, you’ll make better decisions and recover faster when something still goes wrong.

Table of Contents

- The Community Manager's Nightmare Scenario

- Understanding Server Nuking and Attack Patterns

- Core Features of an Effective Anti-Nuke Bot

- Comparing Popular Anti-Nuke Bots in 2026

- Step-by-Step Setup and Configuration Guide

- Incident Response and Troubleshooting

- Beyond the Bot: Best Practices for Server Security

The Community Manager's Nightmare Scenario

You wake up, check Discord, and the server looks unrecognizable. Categories are gone. Channels are gone. Roles have been renamed, stripped, or deleted. Members are panicking in DMs because they’ve been banned or can’t get back in. Someone with privileged access got compromised, turned malicious, or invited the wrong bot.

This is what a nuke feels like in practice. It isn’t just “spam” or a rough moderation incident. It’s a rapid destruction event where the attacker uses permissions you already granted to tear the place apart faster than human moderators can react.

What the damage looks like

A real nuke usually comes in layers, not as a single action. The attacker may start by deleting channels, then banning members, then stripping roles so nobody else can respond cleanly. In some servers, they also spam webhooks to flood every remaining channel with noise and fake announcements.

The operational problem is bigger than the cleanup. Once trust breaks, your team loses time to triage, member reassurance, and internal blame. Even if you rebuild structure, people remember that your server looked defenseless.

A server rarely gets destroyed because nobody cared. It gets destroyed because the team assumed trusted permissions would stay trusted forever.

Why an anti-nuke bot changes the outcome

An anti-nuke bot exists for one reason. It reacts faster than your staff can. If someone suddenly starts banning users, deleting channels, or making dangerous permission changes, the bot can quarantine, kick, ban, or strip access before the attacker finishes the job.

That’s the difference between “we had an incident” and “our community got erased.”

Tools like Wick Bot and Security Bot are useful because they don’t wait for a moderator to notice a disaster in progress. They watch for destructive behavior continuously. If you run a serious Discord community, that’s the baseline.

Understanding Server Nuking and Attack Patterns

A nuke is the abuse of high-impact permissions to disrupt or destroy a server. The attacker doesn’t need a magical exploit. Usually they just need the keys you already handed out, directly or indirectly.

A compromised admin is the simplest example. If someone controls an account with dangerous permissions, they can behave like an insider. The same is true for a malicious or compromised bot with privileged role placement. In security terms, this is less about fancy hacking and more about permission abuse at speed.

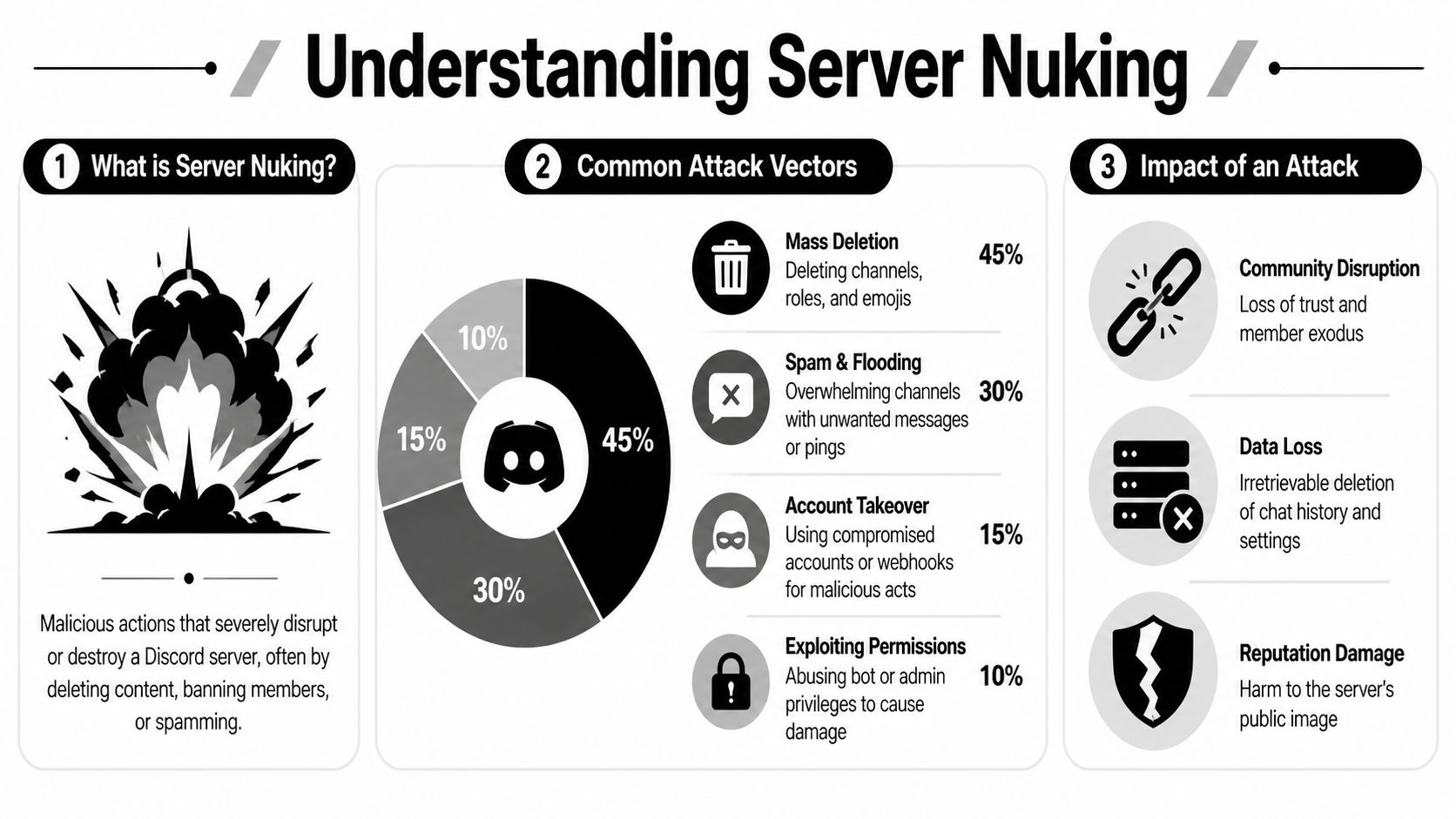

A visual summary helps:

The attack paths that matter most

Some attack patterns show up far more often than others. Because a single nuke usually combines several techniques, the figures overlap: webhook spamming appears in 92% of incidents, mass channel deletion in 85%, mass member banning in 78%, and role stripping in 65%, according to Discord transparency data analyzed in industry reporting.

Those numbers matter because they tell you where to focus first:

- Webhook abuse: Attackers create or hijack webhooks and flood channels at machine speed.

- Channel deletion: They erase structure, knowledge, support history, and onboarding paths.

- Mass bans: They remove your members faster than staff can reverse the damage.

- Role stripping: They cripple your response team by removing permissions or trusted roles.

If your anti-nuke bot isn’t tightly configured around those behaviors, you’re protecting the wrong things.

Why these attacks succeed

The usual root causes are operational, not technical:

- Over-trusted staff roles: Too many people can ban, delete, or edit roles.

- Bad bot hygiene: A utility bot gets admin when it only needed one or two permissions.

- Weak join controls: Raid accounts enter too easily and create noise while the actual attack unfolds.

- No hierarchy planning: The anti-nuke bot sits too low in the role stack to punish a rogue admin or bot.

Practical rule: If your protection bot cannot act above the account causing the damage, you don’t have protection. You have logging.


What a new manager should take from this

Think of dangerous permissions like master keys. The more keys you issue, the more likely one gets lost, copied, or abused. Anti-nuke protection works best when you combine detection with restraint. Fewer dangerous permissions. Better role placement. Clear thresholds for high-risk actions.

That’s why the right anti-nuke setup for Discord isn’t just a bot install. It’s a decision about how much destructive power any person or bot should have before the system intervenes.

Core Features of an Effective Anti-Nuke Bot

An effective anti-nuke bot is a control system for bad moments. The test is simple. Can it spot destructive behavior fast, identify who caused it, and stop that account before staff lose control of the server?

That standard filters out a lot of feature-list noise.

Rate limits and action windows

Good anti-nuke protection starts with thresholds tied to real moderator behavior. One channel deletion can be normal. Ten in a short burst is rarely normal. The same goes for bans, role edits, webhook creation, and permission changes.

The hard part is choosing limits that catch abuse without blocking legitimate work. Set them too loose, and a compromised admin gets time to do real damage. Set them too tight, and your own staff trip the system during cleanup, raid response, or server maintenance.

Use different thresholds for different actions because the risk is different.

- Channel deletions: Keep these low. Legitimate bulk deletion is uncommon, and attackers use channel wipes early to create panic.

- Bans and kicks: Allow slightly more room if your server handles raids often. A moderation team may need to remove many accounts quickly.

- Role edits and permission changes: Keep these very tight. Attackers often change roles first so nobody can stop them.

- Webhook and bot-related actions: Watch these closely. Attackers use webhooks and rogue bots to keep posting even after staff accounts are removed.

A bot that treats every action the same usually creates one of two failures. It either misses the dangerous actions, or it punishes normal moderation.
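To make the idea of per-action limits concrete, here is a minimal sketch of a sliding-window counter. It is not how Wick Bot, Security Bot, or any other tool is implemented. The action names, the `LIMITS` values, and the `ActionTracker` class are all hypothetical and exist only to illustrate the pattern.

```python
from collections import defaultdict, deque

# Hypothetical per-action limits: (max events allowed, window in seconds).
# The numbers are placeholders for illustration, not recommendations.
LIMITS = {
    "channel_delete": (2, 60),
    "member_ban": (8, 60),
    "role_update": (2, 60),
    "webhook_create": (2, 60),
}


class ActionTracker:
    """Counts recent actions per (actor, action) inside a sliding window."""

    def __init__(self, limits):
        self.limits = limits
        self.events = defaultdict(deque)  # (actor_id, action) -> timestamps

    def record(self, actor_id, action, now):
        """Record one action and return True if the actor is over the limit."""
        max_events, window = self.limits[action]
        stamps = self.events[(actor_id, action)]
        stamps.append(now)
        # Drop timestamps that have fallen out of the window.
        while stamps and now - stamps[0] > window:
            stamps.popleft()
        return len(stamps) > max_events


tracker = ActionTracker(LIMITS)
first = tracker.record(1, "channel_delete", now=0)
second = tracker.record(1, "channel_delete", now=10)
third = tracker.record(1, "channel_delete", now=20)  # third deletion in 60 s
```

With these placeholder limits, the third deletion inside a minute trips the check, while the same moderator could issue several bans in that minute without triggering anything. That asymmetry is the point of giving each action its own threshold.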

Event monitoring and attribution

Detection only matters if attribution is accurate. If the bot sees that a channel disappeared but cannot reliably connect that action to the right user or bot, the response becomes guesswork.

That is where weaker setups fail in practice.

A false positive against a real moderator causes confusion at the worst possible time. A missed attribution lets the attacker keep working while staff argue in logs. The operational goal is clear. The bot should tie destructive events to the actor fast enough that automated enforcement is safe to trust.

This is why audit-log aware detection matters more than flashy reporting. A clean dashboard is nice. Correct attribution is what prevents a bad minute from turning into a rebuild.
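Attribution can be sketched as a matching problem: given a destructive event, find the audit-log record that explains it. The example below is a simplified illustration under stated assumptions. `AuditEntry`, its fields, and the `max_skew` tolerance are hypothetical stand-ins, not the data model of Discord or of any specific bot.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AuditEntry:
    """Hypothetical, simplified view of one audit-log record."""
    action: str
    target_id: int
    actor_id: int
    created_at: float  # seconds since some reference point


def attribute(action: str, target_id: int, event_time: float,
              entries: List[AuditEntry], max_skew: float = 5.0) -> Optional[int]:
    """Return the actor behind an event, or None if no entry matches.

    An entry matches when it has the same action and target and was
    created within max_skew seconds of the observed event.
    """
    candidates = [
        e for e in entries
        if e.action == action
        and e.target_id == target_id
        and abs(event_time - e.created_at) <= max_skew
    ]
    if not candidates:
        return None  # refuse to guess; better to alert staff than punish wrongly
    closest = min(candidates, key=lambda e: abs(event_time - e.created_at))
    return closest.actor_id


log = [
    AuditEntry("channel_delete", target_id=100, actor_id=42, created_at=10.0),
    AuditEntry("channel_delete", target_id=200, actor_id=77, created_at=11.0),
]
actor = attribute("channel_delete", target_id=200, event_time=11.4, entries=log)
```

Returning `None` when nothing matches is a deliberate choice in this sketch. Punishing the wrong account is the failure described above, so an unmatched event is safer as an alert than as an automated ban.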

Whitelisting is necessary and dangerous

Whitelisting is where many communities erode their own protection. Every exemption reduces friction for trusted people and systems. Every exemption also creates a path around your controls.

Treat whitelist entries like privileged access, not convenience settings.

| Risky whitelist habit | What happens next |
| --- | --- |
| Whitelisting broad staff roles | One compromised moderator account can bypass protection and act unchecked |
| Whitelisting utility bots by default | A leaked bot token becomes a server-wide incident |
| Leaving old exceptions in place | Nobody remembers the reason, but the access remains |

The safer approach is narrow trust with a written reason for each exception. If a bot needs protection bypass for one workflow, grant that bypass for that workflow. If a senior moderator never needs to mass-delete channels, do not whitelist them for channel deletion events just because they are trusted.

I tell new managers to review the whitelist the same way they would review admin keys. If an exempt account turns hostile, the anti-nuke bot will respond late or not at all.
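One way to picture narrow trust is an exemption record that names a single account, a single action, a written reason, and an optional expiry. This is a sketch under assumptions, not the whitelist format of any real bot. The `Exemption` fields and the `is_exempt` helper are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Exemption:
    """Hypothetical whitelist entry scoped to one account and one action."""
    subject_id: int
    action: str
    reason: str                         # why this exception exists
    expires_at: Optional[float] = None  # None means it never expires


def is_exempt(subject_id: int, action: str, now: float,
              exemptions: Iterable[Exemption]) -> bool:
    """True only if a live exemption covers this exact account and action."""
    for ex in exemptions:
        if ex.subject_id != subject_id or ex.action != action:
            continue
        if ex.expires_at is None or now < ex.expires_at:
            return True
    return False


whitelist = [
    Exemption(501, "member_ban", "raid-response bot bans in bulk"),
    Exemption(9, "channel_delete", "archive cleanup weekend", expires_at=1000.0),
]
```

Here the bot with ID 501 can ban in bulk but gets no bypass for channel deletion, and the cleanup exemption for account 9 lapses on its own once `now` passes 1000. An expiry field is one way to keep old exceptions from outliving their reason.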

Automatic punishments and quarantine

A useful anti-nuke bot needs immediate enforcement options. Common responses include banning, kicking, stripping roles, muting, or quarantining the account so staff can inspect what happened before restoring access.

Each response has a trade-off. Banning is fast and final, but it can slow investigation if you need the account in place to review state changes. Quarantine is safer for false positives, but it may leave enough access for a determined attacker if your quarantine role is poorly designed. Role stripping is effective, but only if the bot sits high enough in the hierarchy to remove the roles that matter.

Choose punishments based on what failure hurts more in your server. In a large public community under raid pressure, speed usually matters most. In a private staff-heavy server, avoiding false positives may matter more because legitimate bulk actions happen more often.

Permission hierarchy decides whether the bot can actually stop anything

Role hierarchy is not a detail. It decides whether enforcement works.

A bot can detect every destructive action perfectly and still fail if it sits below the offending role. In that setup, alerts may fire, logs may fill up, and nothing gets contained. New managers often mistake that for partial protection. It is not protection. It is observation.

Test this before you trust it. Use a staging server or a safe test role. Trigger controlled actions, confirm the bot attributes them correctly, and confirm it can carry out the configured punishment against the target role. Until that test passes, anti-nuke is still a draft, not a defense.
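The hierarchy rule is simple enough to express as a check. In Discord, a bot can only moderate members whose highest role sits below the bot's own highest role, and nobody can act against the server owner. The function below is a minimal sketch of that rule. The parameter names are hypothetical, and a real bot would read role positions from the Discord API rather than take them as plain integers.

```python
def can_enforce(bot_top_position: int, target_top_position: int,
                target_is_owner: bool = False) -> bool:
    """Return True if the bot outranks the target in the role hierarchy.

    Positions follow Discord's convention: a higher number means a
    higher role. Equal positions do not count as outranking.
    """
    if target_is_owner:
        return False
    return bot_top_position > target_top_position


def unprotected_roles(bot_top_position: int, dangerous_roles: dict) -> list:
    """List dangerous roles the bot could not act against.

    dangerous_roles maps a role name to its position.
    """
    return sorted(
        name for name, position in dangerous_roles.items()
        if not can_enforce(bot_top_position, position)
    )


roles = {"Moderator": 5, "Admin": 9, "Utility Bot": 12}
gaps = unprotected_roles(bot_top_position=10, dangerous_roles=roles)
```

In this example the anti-nuke role sits at position 10, so anything held by the "Utility Bot" role at position 12 is out of reach. A list like `gaps` is worth checking before you rely on any punishment setting.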

Comparing Popular Anti-Nuke Bots in 2026

Most servers choosing an anti-nuke bot for Discord end up comparing the same names. Wick Bot is the best-known specialist. Security Bot is attractive because it combines anti-nuke with broader security tooling. Other options exist, but many communities still choose between those two first, then add another layer later if needed.

The problem is that feature comparisons are easier to find than trustworthy performance data.

What the comparison data can and cannot tell you

Performance metrics and real-world effectiveness data are largely absent from anti-nuke bot discussions. One anecdotal test showed AuthGG stopped an attack after 0 channel deletions, while Security Bot allowed 6 and Wick allowed 3, but the test lacked standardized methodology, as noted in this comparison video summary.

That doesn’t mean the test is useless. It means you shouldn’t treat it like a benchmark. Bot latency, server size, role hierarchy, threshold settings, and attack style all change the result. A bot that looks slower in one public test might outperform another in your actual environment if your configuration is tighter.

2026 Anti-Nuke Bot Comparison

| Bot | Key Protections | Whitelisting Granularity | Ideal Use Case | Pricing |
| --- | --- | --- | --- | --- |
| Wick Bot | Strong anti-nuke focus, quarantine-style responses, logging options | Typically suited to careful trust segmentation | Large communities, Web3 projects, teams that want a specialist | Free core features with premium logging |
| Security Bot | Anti-nuke plus automod, logs, and broader security tooling | Good fit for teams that want role and action-based control | Communities that want one broader security bot | Core anti-nuke available in broader toolkit |
| Serax Shield | Considered in bot comparisons and live tests by some communities | Varies by implementation and setup style | Teams evaluating alternatives beyond the two leaders | Varies |
| Anti Nuke Bot and similar niche options | Simpler anti-nuke, anti-raid, and anti-spam positioning | Often simpler, sometimes less nuanced | Smaller communities that want straightforward setup | Varies |

How to choose based on operations, not hype

If your server is large, politically sensitive, or exposed to targeted raids, a specialist like Wick Bot is often easier to justify. Teams choose it when they care most about aggressive anti-nuke posture and are willing to spend more time on hierarchy and whitelist discipline.

Security Bot fits teams that want one tool to cover more ground. That can reduce dashboard sprawl and simplify staff training. The trade-off is that all-in-one tools can tempt teams into enabling many systems at once before they understand how those systems interact.

Don’t choose by popularity alone. Choose by whether your staff can operate the bot correctly at 2 a.m. during a real incident.

The practical decision rule

Shortlist two bots. Install each in a staging server. Simulate moderator actions you typically perform, such as deleting test channels, changing role permissions, and banning test accounts. Then answer three questions:

- Does the bot attribute actions correctly?

- Can your team understand the logs under pressure?

- Does the bot stop risky behavior without constantly punishing normal work?

If one tool wins those tests, that’s your answer. If both are acceptable, prefer the one your team will maintain consistently.

Step-by-Step Setup and Configuration Guide

A usable anti-nuke setup does one job first. It prevents irreversible damage when a staff account, bot token, or high-permission role is abused. Everything else comes after that.

Step 1: Install the bot with the right hierarchy

Invite the bot with only the permissions it needs to detect, log, and respond to destructive actions. Then place its role above any moderator role, automation bot, or custom admin role it may need to stop.

This is the first place new community managers get a false sense of security. The bot appears online, the dashboard looks configured, and nothing happens when a privileged account goes rogue because the bot sits too low in the role stack.

Test hierarchy on day one. Use a safe staff test role and confirm the bot can act against it under controlled conditions. If it cannot touch the accounts that could damage your server, the rest of your setup is paperwork.

Step 2: Enable the modules that stop fast, high-impact damage

Start with the actions that can wreck a server in minutes:

- Unauthorized bot adds

- Channel deletion

- Role deletion

- Role permission changes

- Mass bans or kicks

- Webhook creation or abuse, if your bot supports it

The exact command syntax depends on the bot. The operating model stays the same. Pick the action, set a threshold, choose the response, then test it.

Do not enable every protection category at once. That creates noisy logs, more false positives, and confused staff. Early on, you want clean signals around the actions that are hardest to undo.
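Since command syntax differs between bots, it can help to write your intended rules down in a neutral form first and check them for gaps. The sketch below assumes a hypothetical config shape (action name mapped to `limit`, `window_s`, and `response`). None of these keys or names come from a real bot.

```python
# Hypothetical names for the high-impact actions listed above.
HIGH_IMPACT = {
    "bot_add", "channel_delete", "role_delete",
    "role_permission_update", "mass_ban_kick", "webhook_create",
}
RESPONSES = {"quarantine", "kick", "ban", "strip_roles"}


def validate(config: dict) -> list:
    """Return a list of human-readable problems found in the config."""
    problems = []
    for action in sorted(HIGH_IMPACT - set(config)):
        problems.append(f"{action}: no rule configured")
    for action, rule in sorted(config.items()):
        if rule["limit"] < 1 or rule["window_s"] <= 0:
            problems.append(f"{action}: limit and window must be positive")
        if rule["response"] not in RESPONSES:
            problems.append(f"{action}: unknown response {rule['response']!r}")
    return problems


draft = {
    "channel_delete": {"limit": 2, "window_s": 60, "response": "quarantine"},
    "role_delete": {"limit": 2, "window_s": 60, "response": "quarantine"},
    "mass_ban_kick": {"limit": 8, "window_s": 60, "response": "warn"},
}
issues = validate(draft)
```

For this draft, `issues` flags the three actions with no rule and the unsupported `"warn"` response. Translating a checked plan into your bot's dashboard or commands is then a mechanical step.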

Step 3: Whitelist narrowly and distrust role-based exemptions

Whitelists are where good anti-nuke setups usually fail. Teams add a whole moderator role, then a senior helper role, then a utility bot role, and eventually half the server can bypass protection.

Keep exemptions tight:

- Owner or founder accounts: Give the smallest exemption that still lets them work. Full bypass should be rare.

- Moderator roles: Usually better handled with realistic thresholds than full trust.

- Administrative bots: Exempt only bots with a known task that would otherwise trigger protection.

User-based whitelisting is safer than role-based whitelisting because roles spread. Someone gets promoted for a weekend event, keeps the role longer than planned, and now they also keep the bypass.

A simple rule helps here. If a person or bot does not need to delete channels, edit permissions, add bots, or mass-ban members as part of normal work, do not exempt them from anti-nuke enforcement.

Step 4: Set thresholds around real staff behavior

Thresholds should fit your operation, not somebody else’s screenshot.

A support community may see frequent moderation bursts during spam waves. A private gaming clan may go days without any major admin action. If both servers use the same limits, one gets constant false positives and the other stays too loose to stop a real attack.

Review a normal week of moderator activity before you finalize anything. Look at how often staff delete channels, edit roles, ban users, or change permissions during legitimate work. Then set the bot slightly above that baseline for common tasks and much tighter for rare, destructive actions.

The goal is clarity. Malicious behavior should stand out fast enough that the bot can stop it before staff start arguing in chat about whether the action was intentional.
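The baseline approach can be reduced to a small calculation. This is one possible heuristic, not a standard formula. The `headroom` multiplier and `tight_cap` value are assumptions you would tune for your own server.

```python
import math


def suggest_limit(window_counts, rare_destructive,
                  headroom=1.25, tight_cap=2):
    """Suggest a per-window limit from observed legitimate activity.

    window_counts: how many times staff performed the action in each
    observed window (for example, each busy hour in a normal week).
    rare_destructive: True for actions like channel or role deletion.
    """
    peak = max(window_counts, default=0)
    if rare_destructive:
        # Keep destructive actions tight even if staff once did more.
        return max(1, min(peak, tight_cap))
    # Common tasks get a little room above the busiest legitimate window.
    return max(1, math.ceil(peak * headroom))


ban_limit = suggest_limit([3, 5, 8, 2], rare_destructive=False)
delete_limit = suggest_limit([0, 1, 0], rare_destructive=True)
```

With a busiest legitimate window of 8 bans, the suggested ban limit lands slightly above it, while channel deletion stays at the low cap regardless of history. If staff occasionally need more than the cap allows, a scheduled, time-boxed exemption is usually a better fit than a permanently loose limit.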

Step 5: Add friction where abuse is expensive

Join screening, minimum account age, CAPTCHA, and approval queues all slow people down. They also slow raids down.

That trade-off is real. Extra friction can hurt onboarding, event turnout, or volunteer recruitment. It can also save hours of cleanup and protect your staff from getting buried in junk reports, fake appeals, and scam attempts. If your team handles member issues through Discord, tighten that workflow too. This guide on stopping spam and scam customer support tickets in Discord covers the support side of the same problem.

Use more friction in the places attackers abuse most. New account joins, first-message privileges, ticket creation, and permission-sensitive channels usually deserve stricter controls than general chat.

Step 6: Test before you trust the setup

Do not assume a green dashboard means protection works. Run controlled tests in a staging server or with a temporary admin role in production.

Simulate actions such as:

- Deleting a test channel

- Editing role permissions

- Attempting repeated bans

- Adding an unauthorized bot

- Creating suspicious webhooks

Then answer three operational questions:

- Did the bot attribute the action to the correct account?

- Did it apply the response you intended?

- Can your moderators understand the log entry under pressure?

A bot that blocks attacks but produces unreadable logs still creates incident risk. During a real compromise, staff need enough detail to act fast without guessing who did what.
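If you run these drills regularly, recording each one in a consistent shape makes regressions easier to spot. The sketch below maps the three questions above onto a record and a check. The `DrillResult` fields are hypothetical, and the values would come from your own observations during the test, not from any bot API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DrillResult:
    """Hypothetical record of one controlled anti-nuke test."""
    scenario: str
    expected_actor: int
    attributed_actor: Optional[int]   # who the bot blamed, if anyone
    expected_response: str
    applied_response: Optional[str]   # what the bot actually did
    log_readable: bool                # judged by a moderator, not by code


def failures(result: DrillResult) -> List[str]:
    """Return which of the three operational questions failed."""
    failed = []
    if result.attributed_actor != result.expected_actor:
        failed.append("attribution")
    if result.applied_response != result.expected_response:
        failed.append("response")
    if not result.log_readable:
        failed.append("log clarity")
    return failed


drill = DrillResult(
    scenario="delete test channel x3",
    expected_actor=42, attributed_actor=42,
    expected_response="quarantine", applied_response=None,
    log_readable=True,
)
outcome = failures(drill)
```

In this example the bot blamed the right account but applied no punishment, which is the pattern you would expect when the bot sits too low in the role stack.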

One final point matters more than any feature toggle. Re-test after staff changes, role changes, bot changes, or major server restructures. Anti-nuke protection drifts out of date quietly, and quiet failures are the ones that usually hurt the most.

Incident Response and Troubleshooting

Even well-run servers still need an incident playbook. During an active attack, confusion is the enemy. Staff start talking over each other, someone revokes the wrong permission, and the actual compromised account keeps moving.

The answer is a short sequence. Lock down first. Investigate second. Restore third.

During the attack

When destructive activity starts, move fast and keep the scope narrow.

- Freeze further damage. Activate lockdown tools if your bot supports them. Remove dangerous permissions from suspect roles if needed.

- Identify the actor. Check anti-nuke logs and Discord audit logs together. Don’t rely on chat screenshots.

- Neutralize access. Ban, kick, quarantine, or strip permissions from the compromised account or malicious bot.

- Stop reinfection. Revoke suspicious bot access, rotate staff trust decisions, and review recent invites.

A common mistake is rebuilding channels before the threat is contained. Don’t do cleanup while the attacker still has a path back in.

After containment

Once the server is stable, focus on recovery and root cause:

- Audit role hierarchy: Find out why the attacker had the power they used.

- Review whitelists: Remove any trust entries that were too broad.

- Reconstruct with intention: Don’t recreate the same fragile permission model.

- Document the incident: Save what happened, who acted, and what settings failed.

For teams that want stronger internal account discipline after an incident, this guide on using cold admin accounts for Discord security is worth reviewing. Separating day-to-day moderation from high-privilege administrative access reduces the chance that one compromised account can do everything.

Fixing false positives without gutting protection

False positives happen when your bot sees a legitimate burst of admin activity and treats it like a nuke. The worst response is to disable modules wholesale. That usually creates a bigger hole than the one you were trying to fix.

Use a narrower process:

| Problem | Better fix |
| --- | --- |
| A moderator got punished during a planned cleanup | Raise that specific action threshold slightly or schedule the task under a controlled exempt role |
| A trusted bot triggered anti-nuke | Whitelist only the exact bot and only if its job requires it |
| Logs were too noisy to interpret | Reduce unnecessary modules and keep the high-risk detections clear |

Good anti-nuke tuning doesn’t aim for zero false positives. It aims for manageable friction with strong protection against catastrophic actions.

Beyond the Bot: Best Practices for Server Security

An anti-nuke bot is a safety layer, not a substitute for good operations. The strongest servers treat security as a habit. They enforce 2FA for staff, review audit logs regularly, keep role hierarchies clean, and challenge every request for higher permissions.

They also vet new bots like they would a new contractor. What permissions does this bot need? Who maintains it? What happens if that token is compromised? Those questions prevent a lot more damage than another dashboard toggle.

Support operations matter here too. AI support platforms like Mava can reduce repetitive support tickets by up to 60%, which can free moderator time for security and community health, according to usage figures summarized in TopStats bot ecosystem coverage. Less staff time spent on repetitive queue work means more attention for permission review, incident drills, and suspicious behavior.

If your role structure is messy, fix that before your next scare. This breakdown of Discord permissions by categories, channels, and roles is a useful reference when you’re simplifying access.

Mava helps community teams handle support at scale without losing control of the conversation. If your moderators are drowning in repetitive Discord tickets while also trying to keep the server secure, Mava gives you a shared inbox, AI agents, and cross-channel workflows so humans can focus on the high-risk issues that require judgment.