Web3 Community Management: Discord & Telegram Best Practices

Running a Web3 community isn't like managing a brand's Facebook page or a SaaS help forum. The stakes are different, the members are different, and the threats are different. Token holders can become vocal critics overnight. A single unaddressed scam can unravel months of trust-building. And your community never sleeps, because it spans every time zone on Earth. That's the real terrain of Web3 community management, and it requires a completely different approach than anything traditional community playbooks prescribe.

TL;DR:

- Web3 communities are ecosystems of financial stakeholders, not passive audiences. Token holders scrutinize everything, and silence can trigger FUD and sell-offs.

- Scam bots are relentless. In AMLBot's analysis of 2,500+ investigations, 65% of crypto incidents in 2025 involved social engineering. Without tight moderation infrastructure, a single attack can destroy months of trust.

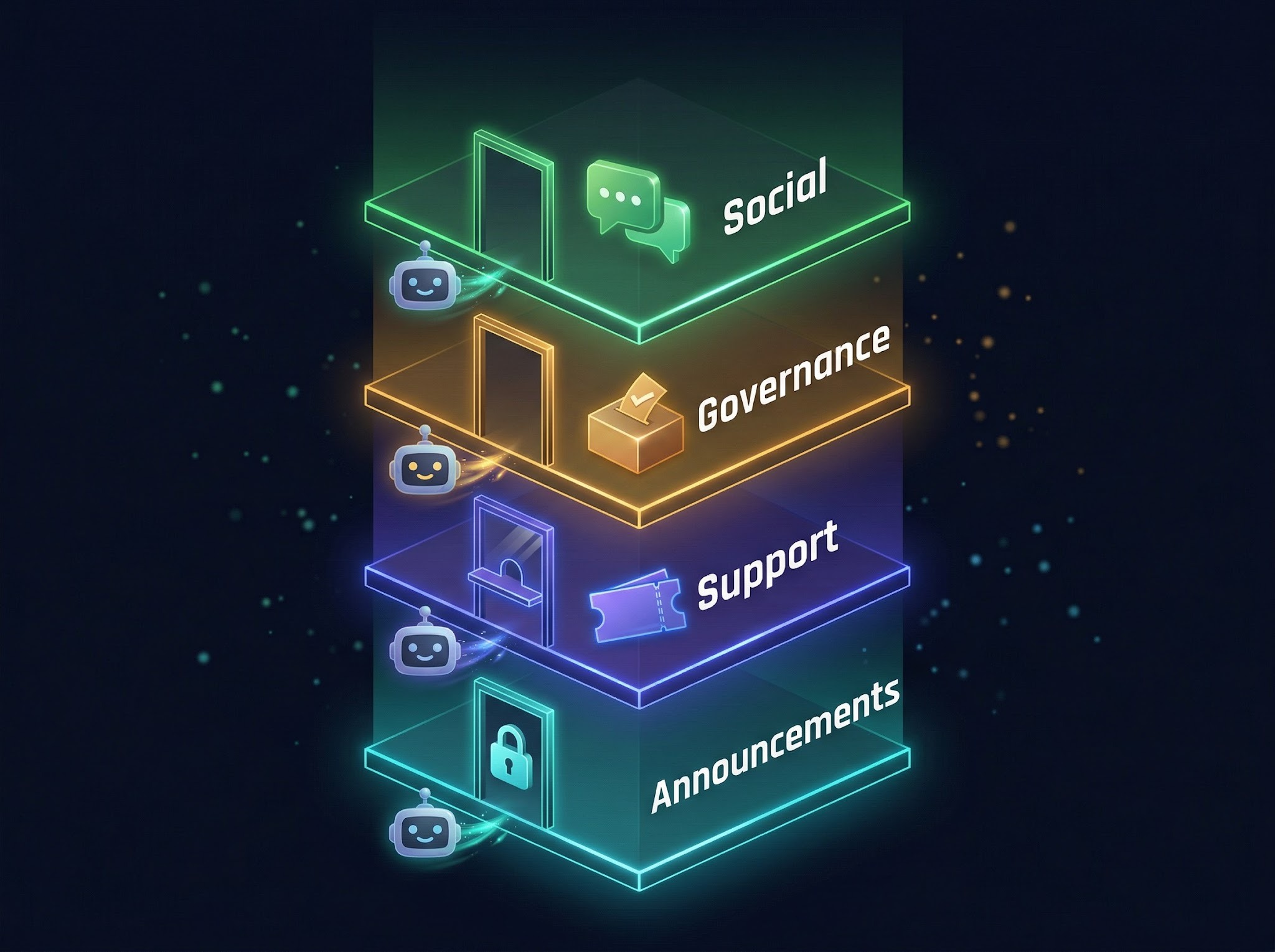

- On Discord, separate channels by purpose (announcements, support, governance, social), gate access with Collab.Land, and use ticket bots to keep support structured and trackable.

- On Telegram, implement verification flows via Rose (also known as MissRose), restrict who can add members, and use pinned messages to keep critical info findable in fast-moving groups.

- When security incidents happen, communicate immediately. Confirm what happened, clarify what your team will never do, and point to one verified source of truth.

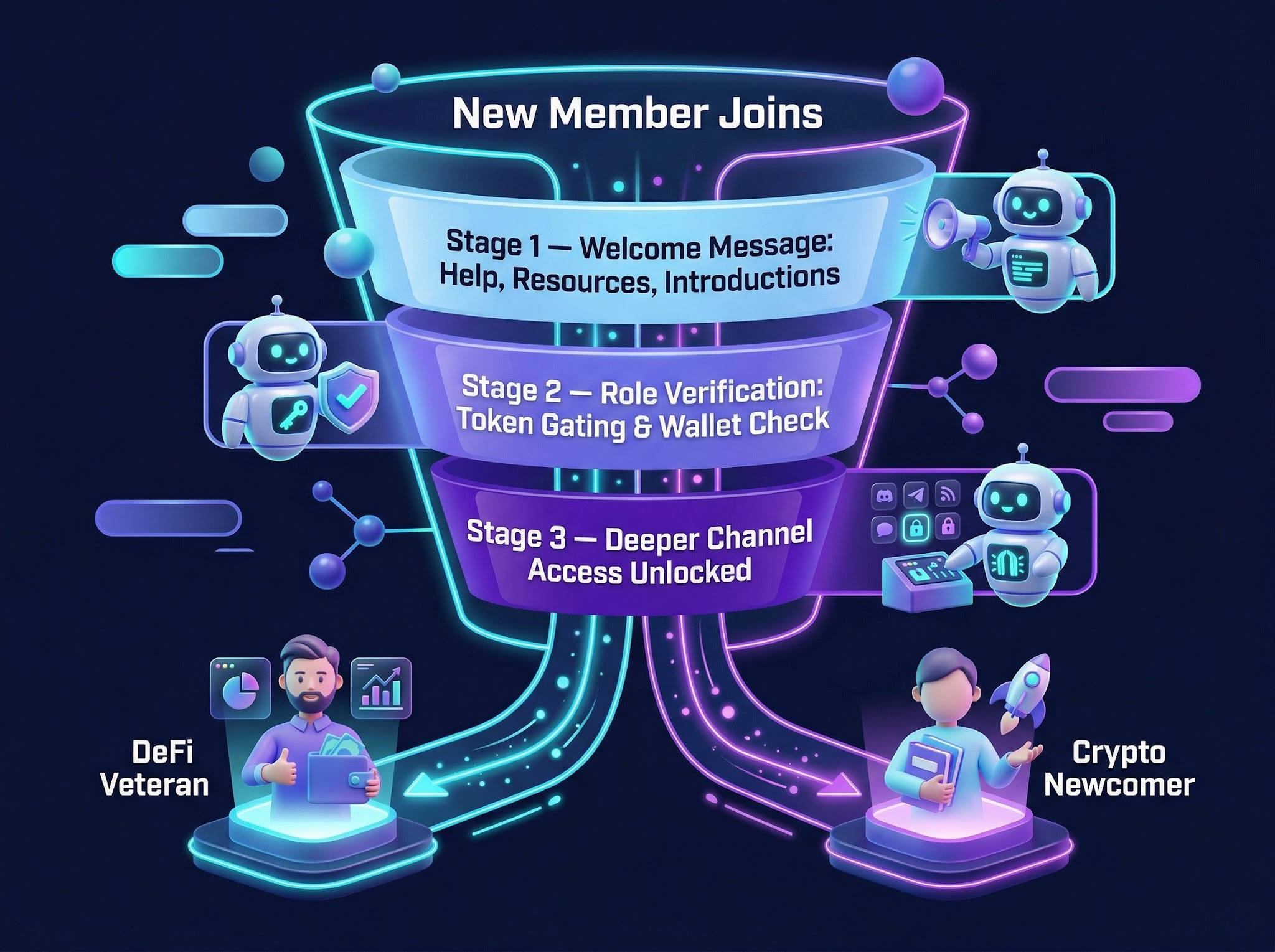

- Onboarding should be layered. Welcome messages point to help, resources, and introductions. Role-gating unlocks deeper access as members progress.

- Skip vanity metrics. Track message activity relative to member count, ticket resolution time, 30/90-day retention, and governance participation rates.

- Moderator burnout is a systems problem, not a staffing problem. Automate routine work so human mods can focus on relationships and judgment calls that bots can't handle.

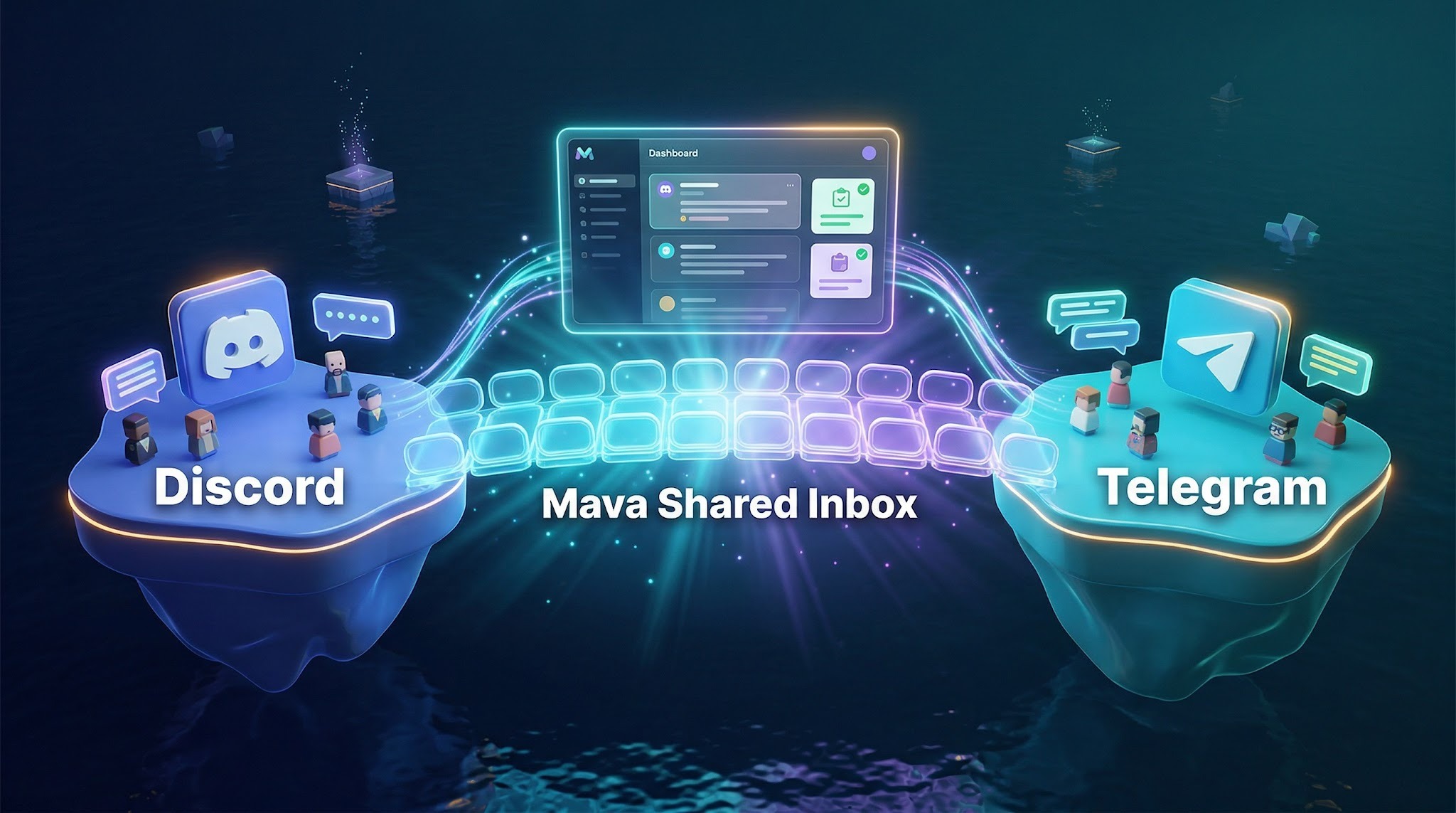

- Unified tooling that connects Discord and Telegram into one support view is what separates teams that scale from teams that burn out.

Why Web3 Community Management Demands a Different Playbook

Most community management frameworks were built for audiences. Web3 communities aren't audiences. They're ecosystems of people who have real financial and social stakes in your project's success. Someone who holds your token isn't just a fan. They're a partial owner, a potential voter in governance decisions, and someone with every reason to scrutinize how your team communicates.

This changes everything about how you structure, moderate, and measure your community. What works for a gaming community or a SaaS user group falls flat in a Web3 context, where decentralization isn't just a technical property but a core cultural expectation.

The Core Pain Points: Scam Bots, Mod Burnout, and 24/7 Global Pressure

The most immediate pressure any Web3 community manager feels is security. Scam bots are relentless. The moment a project gains traction, fake admins flood member DMs, phishing links circulate in public channels, and impersonator accounts start sowing confusion. According to AMLBot's analysis of 2,500+ investigations, 65% of crypto incidents in 2025 were driven by social engineering, with phishing ranking as the second most common attack vector by case volume (18% of all cases), behind investment scams. Without tight moderation infrastructure, a single bot raid can shake member confidence in ways that take weeks to repair.

Moderators carry the weight of keeping this chaos at bay, often without adequate Discord customer support tooling. When a community operates across multiple time zones and platforms simultaneously, no single moderator team can sustainably cover all of it. Burnout is a direct product of under-resourced moderation, and burned-out mods make mistakes: they miss spam, respond poorly under pressure, or simply go quiet, leaving the community rudderless at exactly the wrong moment.

The solution isn't just hiring more mods. It's building systems that absorb routine work automatically, so human moderators can focus on the nuanced, relationship-driven aspects of community health that bots genuinely can't handle.

Members as Stakeholders: Why Transparency Is Non-Negotiable

In traditional communities, a delayed response or vague update is mildly annoying. In Web3, it's fuel for speculation and FUD. When members hold tokens, they interpret silence as a signal. Lack of transparency doesn't just frustrate people; it can trigger sell-offs, forum outrage, and very public reputational damage.

Effective crypto community management treats every significant decision, delay, or change as a communication opportunity. Members who understand your reasoning are far more likely to stay engaged than those left guessing. Accountability in your public channels, honest governance updates, and responsive leadership transform token holders from spectators into invested contributors.

Building a Discord Structure That Actually Scales

Discord is, for many projects, the central hub of Web3 community life. Its server structure, role permissions, and bot ecosystem make it uniquely suited to managing large, diverse communities, but only if you build it intentionally.

Channel Architecture: Separating Announcements, Support, Governance, and Social

A scalable Discord structure separates channels by purpose and expected behavior. Announcement channels should be read-only for members, tightly controlled, and reserved exclusively for verified project updates. Mixing announcements with general chat is one of the most common mistakes in early-stage Web3 servers: critical information gets buried within hours, and members stop checking because the signal-to-noise ratio is too low.

Support channels deserve their own dedicated space with clear guidelines. Governance channels host proposal discussions, Snapshot voting rationale, and contributor conversations. Social channels give the community room to build the informal relationships that hold everything together over time. When these functions are cleanly separated, members know exactly where to go.
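Before creating channels in Discord, it can help to write the layout down as data and check it against the rules above. The sketch below is a planning model, not Discord's API: the channel names, the `Purpose` categories, and the `members_can_post` flag are assumptions chosen for illustration. It flags announcement channels that members can post in and any purpose that has no dedicated channel.

```python
from dataclasses import dataclass
from enum import Enum


class Purpose(Enum):
    ANNOUNCEMENTS = "announcements"
    SUPPORT = "support"
    GOVERNANCE = "governance"
    SOCIAL = "social"


@dataclass(frozen=True)
class Channel:
    name: str
    purpose: Purpose
    members_can_post: bool  # hypothetical flag standing in for send-message permission


# Hypothetical layout; names are illustrative only.
LAYOUT = [
    Channel("announcements", Purpose.ANNOUNCEMENTS, members_can_post=False),
    Channel("support", Purpose.SUPPORT, members_can_post=True),
    Channel("governance-discussion", Purpose.GOVERNANCE, members_can_post=True),
    Channel("general", Purpose.SOCIAL, members_can_post=True),
]


def audit_layout(channels: list[Channel]) -> list[str]:
    """Return human-readable problems found in a channel layout."""
    problems = []
    for ch in channels:
        # Announcements stay read-only so verified updates are not buried in chat.
        if ch.purpose is Purpose.ANNOUNCEMENTS and ch.members_can_post:
            problems.append(f"#{ch.name}: announcements should be read-only for members")
    # Every purpose should have at least one dedicated channel.
    covered = {ch.purpose for ch in channels}
    for purpose in Purpose:
        if purpose not in covered:
            problems.append(f"no channel dedicated to {purpose.value}")
    return problems
```

Running `audit_layout(LAYOUT)` returns an empty list, while a server with only a postable `#news` announcements channel would get one permission warning plus three "no channel dedicated to" warnings.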

Bots, Role Hierarchies, and Keeping Support Manageable

The right bot stack makes the difference between a server that scales and one that collapses under its own weight.

- Collab.Land handles token-gating and wallet verification, assigning roles based on on-chain holdings so only genuine token holders or NFT owners access exclusive channels.

- Captcha.bot filters out automated spam accounts at the entry point.

- For ongoing moderation, MEE6 and Carl-bot handle auto-moderation, anti-spam filtering, and role management, while Dyno adds logging and reaction roles suited to growth-focused servers.

- A common baseline among established Web3 projects is MEE6 or Carl-bot alongside Collab.Land, with additional tools layered in based on server size.
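Token-gating reduces to a simple rule: once a wallet is verified, grant each role whose holding threshold the wallet meets. The sketch below models only that rule. Wallet verification and on-chain balance lookups, which tools like Collab.Land handle, are out of scope, and the token identifiers, role names, and thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateRule:
    role: str           # Discord role to grant (hypothetical name)
    token: str          # token or NFT collection identifier (hypothetical)
    min_balance: float  # minimum holding required for the role


# Illustrative rules; real thresholds are a project decision.
RULES = [
    GateRule("holder", "PROJECT", 1),
    GateRule("whale", "PROJECT", 10_000),
    GateRule("nft-holder", "PROJECT_NFT", 1),
]


def roles_for(balances: dict[str, float], rules: list[GateRule]) -> set[str]:
    """Return the roles a verified wallet qualifies for.

    `balances` maps token identifiers to amounts already read from chain
    for a wallet the member has proven they control.
    """
    return {r.role for r in rules if balances.get(r.token, 0) >= r.min_balance}
```

Because roles are derived entirely from current balances, re-running the check when holdings change revokes access from a wallet that drops below a threshold, which keeps gated channels limited to genuine holders.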

Role hierarchies give members a visible path for increasing involvement and help moderators triage requests by directing people to the right person. A contributor asking a governance question should have a different support path than a brand-new member asking where to buy tokens.

Ticket bots are non-negotiable at any meaningful scale. When support requests flow through open channels, context gets lost and members repeat the same questions because previous answers are impossible to find. Ticket bots convert support requests into structured, trackable conversations with clear ownership. Private threads further protect sensitive discussions from cluttering public spaces.
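The combination of role hierarchies and ticket bots can be sketched as follows: route each new ticket to a queue based on the member's roles, give it a single owner, and record when it opened so resolution time can be measured later. This is not any particular ticket bot's data model. The queue names, the routing table, and the helper functions are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from itertools import count
from typing import Optional

_next_id = count(1)

# Hypothetical routing table, checked in order: the first matching role wins.
ROUTES = [
    ("contributor", "governance-team"),
    ("holder", "holder-support"),
]
DEFAULT_QUEUE = "general-support"


@dataclass
class Ticket:
    member: str
    subject: str
    queue: str
    ticket_id: int = field(default_factory=lambda: next(_next_id))
    owner: Optional[str] = None
    status: str = "open"
    opened_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def route(member_roles: set[str]) -> str:
    """Pick a support queue from the member's roles."""
    for role, queue in ROUTES:
        if role in member_roles:
            return queue
    return DEFAULT_QUEUE


def open_ticket(member: str, roles: set[str], subject: str) -> Ticket:
    return Ticket(member=member, subject=subject, queue=route(roles))


def claim(ticket: Ticket, moderator: str) -> None:
    """Give the ticket one clear owner; refuse a second claim."""
    if ticket.owner is not None:
        raise ValueError(f"ticket {ticket.ticket_id} already owned by {ticket.owner}")
    ticket.owner = moderator
    ticket.status = "claimed"


def resolve(ticket: Ticket) -> float:
    """Close the ticket and return its resolution time in hours."""
    ticket.status = "resolved"
    return (datetime.now(timezone.utc) - ticket.opened_at).total_seconds() / 3600
```

A contributor's governance question lands in `governance-team`, while a brand-new member with no roles lands in `general-support`. Refusing a second `claim` is what keeps ownership unambiguous.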

Structuring Telegram for Web3 Support and Engagement

Telegram remains dominant in crypto, particularly in regions where it's the primary communication tool for financial and tech communities. Its group architecture is simpler than Discord's, which makes it both easier to start and harder to scale without deliberate planning. We've seen projects supporting entire communities through Telegram support alone, which makes the structure decisions even more consequential.

Anti-Spam Protocols, Bot Configuration, and Verification Flows

Telegram groups without proper anti-spam configuration become unusable fast. The first line of defense is a verification flow at entry: new members should complete a CAPTCHA or question-based challenge via Rose (also known as MissRose) before they can post. Configuring the bot to auto-delete messages from unverified users that contain external links, along with any message matching known phishing patterns, gives your mod team a meaningful head start. Restricting who can add members and enabling admin approval for new joins prevents most bot invasions before they start.
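The two auto-delete rules can be written as a single decision function. This is a sketch of the logic only, not Rose's configuration syntax: the link regex and the phishing phrasings are assumptions for illustration, and a real pattern list would be maintained and tuned by the mod team.

```python
import re

# Matches http(s) URLs, "www." links and t.me invite links.
LINK_RE = re.compile(r"(https?://|www\.|t\.me/)\S+", re.IGNORECASE)

# Illustrative phishing phrasings only; not an exhaustive or official list.
PHISHING_RES = [
    re.compile(pattern, re.IGNORECASE)
    for pattern in (
        r"claim\s+(your\s+)?airdrop",
        r"connect\s+(your\s+)?wallet\s+to\s+(claim|verify|validate)",
        r"wallet\s+(validation|rectification|sync)",
        r"dm\s+(me|admin)\s+for\s+support",
    )
]


def should_delete(text: str, user_verified: bool) -> bool:
    """Decide whether a group message should be auto-deleted.

    Rule 1: members who have not passed verification may not post links.
    Rule 2: any message matching a known phishing pattern is removed,
            regardless of who sent it.
    """
    if not user_verified and LINK_RE.search(text):
        return True
    return any(p.search(text) for p in PHISHING_RES)
```

In practice you would exempt admins from rule 2, since your own security warnings may quote the same phrases scammers use.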

Pinned Messages, Topic Threads, and Keeping Information Findable

In a fast-moving Telegram group, important information disappears within hours. Pinned messages are your most powerful tool for maintaining a persistent reference layer. Use them for critical links: your official website, token contract address, support bot, and active announcements members ask about repeatedly.

For communities using Telegram Topics, organizing conversations by subject gives members a structured way to find relevant discussions. The easier it is to find answers independently, the fewer repetitive support requests your team fields.

Security and Scam Prevention Across Both Platforms

Security in Web3 communities is an ongoing operational responsibility, not a one-time setup. TRM Labs reported that approximately $35B in cryptocurrency flowed into fraud schemes globally in 2025, with scam proceeds often moving across wallets and chains within 24 to 48 hours. The most dangerous vectors are fake admin DMs, wallet drainer links disguised as airdrop claims, compromised announcement channels, and impersonation bots mimicking your support team.

On Discord, limiting direct messages between server members, restricting who can mention @everyone and large roles, and requiring server-level 2FA for moderators closes many common attack vectors. On Telegram, the entry controls described above, restricting who can add members and requiring admin approval for new joins, do the equivalent work.
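Because these settings live in different admin panels on each platform, a written checklist makes gaps easier to spot during periodic reviews. The keys below are hypothetical labels for the controls named above, not Discord or Telegram API fields. You would fill the `settings` dict in by hand or from your own tooling.

```python
# Hypothetical checklist keys; map them to each platform's actual settings.
REQUIRED = {
    "discord": {
        "member_dms_limited": "limit DMs between server members",
        "everyone_mentions_restricted": "restrict @everyone and large role mentions",
        "mod_2fa_required": "require 2FA for moderators",
    },
    "telegram": {
        "member_adds_restricted": "restrict who can add members",
        "join_approval": "require admin approval for new joins",
    },
}


def missing_controls(settings: dict[str, dict[str, bool]]) -> list[str]:
    """List every required control that is not explicitly enabled."""
    gaps = []
    for platform, controls in REQUIRED.items():
        current = settings.get(platform, {})
        for key, description in controls.items():
            # Anything unset is treated as missing, so new servers fail safe.
            if not current.get(key, False):
                gaps.append(f"{platform}: {description}")
    return gaps
```

Treating unknown settings as disabled means a freshly created server reports all five gaps until someone confirms each control.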

When a security incident does occur, communicate immediately and directly: confirm what happened, clarify what your team will and will not do (we will never ask for your seed phrase, we will never DM you first), and provide a single verified source of truth. Communities that respond fast and transparently recover trust far more quickly than those that go quiet.

Member education matters as much as technical controls. A community that regularly reinforces security norms builds a culture of vigilance. Scammers rely on members who don't know the rules. Publishing a short, pinned security FAQ on both platforms is a low-effort, high-impact move every Web3 project should implement from day one. Using a centralized support dashboard like Mava also reduces exposure to fake "support" DMs by giving members one verified, visible place to submit requests rather than relying on direct messages.

Onboarding New Members Without Overwhelming Them

First impressions in Web3 communities carry unusual weight. New members arrive with varying crypto literacy, and the gap between a veteran DeFi user and someone new to blockchain is enormous. A welcome experience calibrated only for experts alienates newcomers; one calibrated only for beginners frustrates your most active contributors.

The answer is layered onboarding. A welcome message should point members to three things: where to get help, where to find key resources, and where to introduce themselves. Role-gating content then gives members access to deeper channels as they progress. Automated welcome bots handle this without requiring a human moderator to respond to every new join, making someone's first ten minutes feel guided rather than overwhelming.
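Layered onboarding can be modeled as ordered tiers, where each tier unlocks its own channels plus everything below it, together with a welcome message that points to the three starting places. The tier names, channel names, and message text are hypothetical. In a live server, the tiers would map to roles assigned by verification or token-gating bots.

```python
# Hypothetical tiers, ordered from entry level upward.
TIERS = [
    ("newcomer", ["welcome", "help", "resources", "introductions"]),
    ("verified", ["general", "support"]),
    ("holder", ["holders-lounge", "governance-discussion"]),
    ("contributor", ["contributors"]),
]

# Points new members to help, key resources, and introductions.
WELCOME = (
    "Welcome, {name}! Get help in #help, find key links in #resources, "
    "and say hi in #introductions."
)


def visible_channels(tier: str) -> list[str]:
    """Channels unlocked at `tier`, including everything from lower tiers."""
    unlocked: list[str] = []
    for name, channels in TIERS:
        unlocked.extend(channels)
        if name == tier:
            return unlocked
    raise ValueError(f"unknown tier: {tier!r}")
```

A newcomer sees only the four starter channels. Governance discussion appears once a member reaches the `holder` tier, so beginners are not confronted with everything on day one.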

Measuring What Actually Matters in a Web3 Community

Vanity metrics like total member count tell you very little about community health. A server with 50,000 members and 200 daily active users is a ghost town. The numbers worth tracking include message activity relative to member count, support ticket resolution times (target a sub-2-hour SLA for common queries), member retention across 30- and 90-day windows, and satisfaction rates on resolved support requests.
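The metrics above are simple ratios once you have the raw data. The sketch below assumes you can export join dates, last-active dates, and ticket resolution times from your own tools. It defines retention in one particular way, as still active on or after day N since joining, which is an assumption you may want to change.

```python
from datetime import date, timedelta


def activity_ratio(daily_active: int, members: int) -> float:
    """Share of members active on a given day."""
    return daily_active / members if members else 0.0


def sla_compliance(resolution_hours: list[float], target_hours: float = 2.0) -> float:
    """Fraction of resolved tickets closed within the target SLA."""
    if not resolution_hours:
        return 0.0
    return sum(h <= target_hours for h in resolution_hours) / len(resolution_hours)


def retention(joined: dict[str, date], last_active: dict[str, date],
              window_days: int, as_of: date) -> float:
    """Share of a cohort still active `window_days` after joining.

    Only members who joined at least `window_days` before `as_of` are
    counted, so recent joiners do not drag the number down.
    """
    window = timedelta(days=window_days)
    cohort = [m for m, d in joined.items() if d + window <= as_of]
    if not cohort:
        return 0.0
    retained = [m for m in cohort
                if m in last_active and last_active[m] >= joined[m] + window]
    return len(retained) / len(cohort)
```

For the ghost-town example, `activity_ratio(200, 50_000)` gives 0.004, meaning only 0.4% of members are active on a given day.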

Governance participation rates are particularly telling. A community where very few members vote on Snapshot proposals has an engagement problem that no channel restructuring will fix on its own. Regular pulse surveys and qualitative feedback from your most active members round out the picture in ways that quantitative dashboards alone cannot.
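Governance participation can be measured in two ways that often diverge: by headcount, meaning the share of eligible addresses that voted, and by weight, meaning the share of voting power that was cast. The sketch below assumes you have exported voter addresses and token balances for a proposal. The addresses and balances are placeholders, and the code does not call Snapshot's API.

```python
def headcount_participation(voters: set[str], eligible: set[str]) -> float:
    """Share of eligible addresses that cast a vote."""
    if not eligible:
        return 0.0
    return len(voters & eligible) / len(eligible)


def weighted_turnout(voters: set[str], balances: dict[str, float]) -> float:
    """Share of total voting power (token balance) that was cast."""
    total = sum(balances.values())
    if total == 0:
        return 0.0
    return sum(balances[a] for a in voters if a in balances) / total


# Placeholder data: one large holder votes, three smaller holders do not.
balances = {"0xa": 900, "0xb": 50, "0xc": 30, "0xd": 20}
voters = {"0xa"}
```

With this data, headcount participation is 25% while weighted turnout is 90%. A single large holder can make token-weighted turnout look healthy even when most members are not voting, so tracking both numbers gives a clearer picture of engagement.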

How Mava Unifies Your Discord and Telegram Community Operations

Managing Discord and Telegram simultaneously means handling two distinct platforms with different moderation tools, different bot ecosystems, and no native way to share context between them. A support request that starts in Telegram and continues in Discord lives in two separate silos. Without a unified view, your team either duplicates work or loses track of conversations entirely.

Mava is built specifically for this problem. Our shared inbox connects your Discord, Telegram, Slack, web chat, and email channels so your support team works from one place regardless of where a conversation started. Mava's AI support handles up to 50-60% of repetitive FAQs automatically across public channels and private tickets, covering 100+ languages and significantly reducing mod burnout on the questions that never stop coming: "when mainnet?", "how do I stake?", "where's my airdrop?". For complex issues, workflow tools including conversational forms, template answers, group ticketing, and automation rules make sure nothing falls through the cracks.

We work with 3,000+ communities and have processed 3.5M+ support tickets, including for projects like EigenLayer, Alchemy, Layer3, Fusionist, and Magic Square. Our pricing scales by support request volume rather than per seat, so adding agents as your team grows doesn't inflate your costs.

The practical result is a support operation that handles routine work at scale while freeing your human team to focus on what genuinely requires human judgment: relationships, governance, and the trust-building that no bot can replicate.